
Feb 16, 2026

The Hidden Risks of AI Transformation in PE Portfolio Companies

PE returns now rely on operations, but AI pilots are failing. Here are the 5 hidden risks blocking value in portfolio companies.

Private equity is under more pressure to create operational value than it has been in years.

According to McKinsey's Global Private Markets Report, the average holding period for buyout assets has stretched to 6.7 years, the longest since 2005, and more than 18,000 portfolio companies are sitting beyond the traditional four-year exit window. Multiple expansion is no longer carrying returns. The firms that perform from here will do it through operations, and AI transformation is the most obvious lever available.

The problem is that most portfolio companies adopting AI are not seeing returns from it. They are running pilots, buying tools, and checking boxes on value creation plans without building the operational discipline required to make any of it work. The risks in this gap are specific, measurable, and largely avoidable once you know where to look. This post covers the ones we see most often.

AI Adoption in PE Is High, but Transformation Is Not

What "High Adoption, Low Transformation" Looks Like in Practice

AI activity across PE portfolios is significant. According to Bain's 2025 Global Private Equity Report, a majority of portfolio companies are in some phase of generative AI testing or development. That sounds encouraging until you look at what "some phase" actually means. Only about 20% of those companies have moved AI use cases into production and are seeing concrete results. The rest are still experimenting.

This pattern holds across the broader enterprise landscape, too. Ropes & Gray's quarterly AI report noted that despite $30 to $40 billion in enterprise GenAI investment, the vast majority of companies have seen little to no P&L impact from AI so far. In PE specifically, 78% of AI-related deals in Q3 2025 were add-ons, which suggests that firms are buying capabilities rather than building them internally, and often bolting them onto portfolio companies without a clear integration plan.

Meanwhile, a PitchBook survey of PE investors found that 22% of respondents flagged rapid AI adoption as a risk to their portfolio companies' business models. That concern is worth paying attention to, because it signals that investors are starting to see AI as a source of risk in their portfolios, not just upside.

Why the Shift from Multiple Expansion to Operational Improvement Raises the Stakes

For the past decade, PE returns leaned heavily on financial engineering and favorable entry multiples. That environment has changed. Interest rates are higher, entry multiples remain elevated, and the primary return driver has shifted to operational improvement. AI is supposed to be the tool that delivers that improvement, but when it is adopted without structure, it becomes another line item that is hard to justify at exit.

The firms that will benefit from AI transformation are the ones treating it as an operational change, not a technology purchase. Understanding what AI transformation actually looks like in practice is the starting point for that shift.

Where Does AI Transformation Go Wrong in Portfolio Companies?

Misaligned Use Cases: Spending on Sales Pilots Instead of Operations

One of the most common mistakes we see is portfolio companies investing AI budgets in sales and marketing pilots. These are visible, easy to pitch to the board, and feel productive. But the evidence points to higher returns from AI in back-office operations: compliance, finance, document processing, and repetitive administrative workflows.

The reason is straightforward. Sales and marketing require judgment, relationship context, and human nuance that current AI handles poorly. Back-office operations involve structured, repetitive tasks with clear inputs and outputs, which is exactly what AI is good at. When portfolio companies spend months building a sales chatbot that underperforms while ignoring the finance team that manually reconciles reports across three systems, the AI initiative looks like it failed. In reality, it was just pointed at the wrong problem.

Understanding the hidden costs that cause AI projects to fail is useful here, because the misalignment between use case and investment usually shows up as a cost problem before it shows up as a performance problem.

Decentralized AI Strategies That Don't Scale Across the Portfolio

FTI Consulting's AI Radar for Private Equity found that 40% of PE firms are managing AI investments at the individual portfolio company level with no centralized coordination. Each company picks its own tools, runs its own pilots, and builds its own internal expertise from scratch.

This decentralized model creates several compounding problems. Learnings from one portfolio company never reach the others. Each company negotiates its own vendor contracts, often paying more for less. Security and governance standards vary across the portfolio, which creates compliance risk that the GP may not see until it surfaces during due diligence or an audit. And the talent required to run AI projects well is scarce enough that asking each portfolio company to find and retain it independently is impractical for most mid-market firms.

The part people miss is that the cost of decentralization is not just inefficiency; it is also speed. Firms running centralized AI programs consistently move from pilot to production faster, because they are not rebuilding the same foundational work at every company. Settling the difference between AI agents and AI tools once at the firm level, for example, spares each portfolio company from making that evaluation on its own and arriving at a different conclusion.

Is Shadow AI a Real Threat to PE-Backed Companies?

The Governance Gap Between Adoption and Oversight

Shadow AI is the use of AI tools by employees without IT approval, security review, or compliance oversight. It is the AI equivalent of shadow IT, but with higher stakes because the tools involved process, store, and sometimes learn from the data fed into them.

The scale of the problem is significant. A 2025 survey of enterprise IT leaders found that 90% of organizations with over 1,000 employees are concerned about shadow AI from a privacy and security standpoint, and nearly 80% have already experienced negative AI-related data incidents. This is not a theoretical risk. It is already happening in most large organizations, including PE-backed ones.

The dynamic that drives it is predictable. Employees are under pressure to use AI to improve productivity. Approved enterprise tools are either unavailable, too slow to procure, or too limited for what people need. So employees use personal accounts on tools like ChatGPT or Claude, paste in company data, and get their work done. The work improves. The risk accumulates silently.

Data Exposure, Compliance Risk, and the Cost of Unmanaged AI

The financial consequences of shadow AI are becoming clearer. IBM's 2025 Cost of a Data Breach Report found that shadow AI was involved in 20% of the breaches it studied, and that these breaches carry a cost premium: $4.63 million per breach versus $3.96 million for standard breaches. For portfolio companies in regulated industries like financial services or healthcare, a single breach tied to unauthorized AI use can trigger compliance investigations that delay or derail an exit.
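To put the IBM figures in proportion, the premium works out as follows (simple arithmetic on the two per-breach averages quoted above):

```latex
\Delta = \$4.63\text{M} - \$3.96\text{M} = \$0.67\text{M},
\qquad
\frac{\Delta}{\$3.96\text{M}} = \frac{0.67}{3.96} \approx 16.9\%
```

In other words, a breach involving shadow AI costs roughly a sixth more than a standard one.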

PE firms that want to manage this risk need to start with visibility. You cannot govern what you cannot see, and most firms do not have a reliable inventory of the AI tools their portfolio companies are actually using. We have written specifically about adopting AI without creating data security risk, and the approach starts with mapping what already exists before adding anything new.
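Mapping what already exists can start with something as simple as comparing the AI services seen in network or expense data against an approved list. The sketch below assumes you already have (portfolio company, domain) observations, for example exported from proxy logs; the `KNOWN_AI_DOMAINS` mapping, the internal domain, and the `APPROVED` set are hypothetical placeholders, not a complete catalog.

```python
from collections import defaultdict

# Hypothetical mapping of observed domains to AI tool names; a real
# inventory would rely on a maintained, much larger catalog.
KNOWN_AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "assistant.internal.example": "Approved internal assistant",
}

# Hypothetical set of tools that have passed security and compliance review.
APPROVED = {"Approved internal assistant"}


def shadow_ai_inventory(observations):
    """Group unapproved AI tools by portfolio company.

    observations: iterable of (company, domain) pairs.
    Returns {company: sorted list of unapproved tool names}.
    """
    found = defaultdict(set)
    for company, domain in observations:
        tool = KNOWN_AI_DOMAINS.get(domain)
        # Skip non-AI traffic and tools already on the approved list.
        if tool is not None and tool not in APPROVED:
            found[company].add(tool)
    return {company: sorted(tools) for company, tools in found.items()}


if __name__ == "__main__":
    logs = [
        ("PortCo A", "chatgpt.com"),
        ("PortCo A", "assistant.internal.example"),
        ("PortCo B", "claude.ai"),
        ("PortCo B", "example.com"),
        ("PortCo A", "chatgpt.com"),
    ]
    print(shadow_ai_inventory(logs))
    # {'PortCo A': ['ChatGPT'], 'PortCo B': ['Claude']}
```

Domain-level matching cannot tell a personal account from an enterprise one on the same service, so a list like this is a starting point for conversations with each company, not a final verdict.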

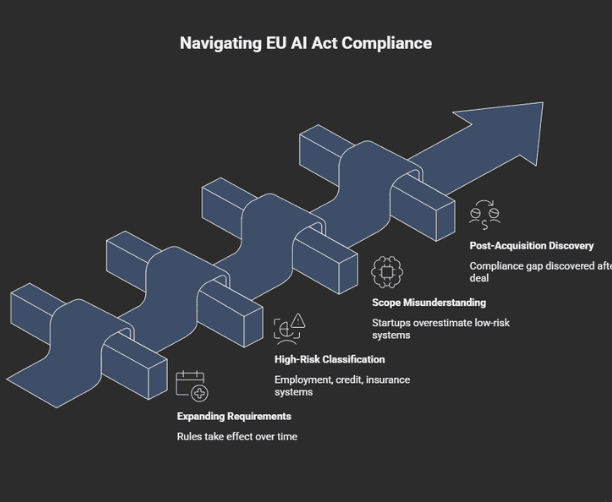

How Does the EU AI Act Change the Risk Profile for Portfolio Companies?

High-Risk Classification and Compliance Timelines

The EU AI Act is the first major regulatory framework for artificial intelligence, and its requirements are expanding on a defined timeline. The rules on prohibited AI practices took effect in February 2025. Requirements for general-purpose AI models became effective in August 2025. The high-risk AI system obligations, which carry the heaviest compliance burden, take effect in August 2026 and August 2027.

For PE portfolio companies, the high-risk category is the one that matters most. AI systems used in employment decisions, credit scoring, insurance risk assessment, and certain financial services applications all fall into this classification. These systems require conformity assessments, detailed technical documentation, ongoing monitoring, and human oversight mechanisms. Penalties are tiered: breaches of the high-risk obligations can reach €15 million or 3% of global annual turnover, whichever is higher, and violations of the prohibited-practice rules can reach €35 million or 7%.
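The "whichever is higher" rule is easy to misread, so here is a small sketch of how the caps combine. It assumes the tiers in Article 99 of the Act as we understand them: up to €35 million or 7% of worldwide annual turnover for prohibited practices, up to €15 million or 3% for most other breaches (including the high-risk obligations), and, for SMEs and start-ups, whichever of the two amounts is lower. These are statutory maximums, not predicted fines, and the function and tier names are illustrative.

```python
# Illustrative ceilings based on Article 99 of the EU AI Act.
# Each tier is (fixed cap in euros, percentage of worldwide annual turnover).
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),  # includes high-risk obligations
}


def max_fine_eur(worldwide_turnover_eur: int, tier: str, is_sme: bool = False) -> int:
    """Return the maximum possible fine for a tier.

    Larger companies face the higher of the fixed cap and the turnover
    percentage; SMEs and start-ups face the lower of the two.
    Integer arithmetic avoids floating-point rounding on euro amounts.
    """
    fixed_cap, pct = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * pct // 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # A hypothetical portfolio company with €800M turnover.
    print(max_fine_eur(800_000_000, "prohibited_practice"))  # 56000000
    print(max_fine_eur(800_000_000, "other_obligation"))     # 24000000
```

For mid-market companies the fixed amount usually sets the ceiling; the percentage only dominates once turnover passes €500 million in either tier.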

The part that catches firms off guard is scope. About 33% of AI startups estimate that their systems qualify as high-risk under the Act, which is significantly higher than the 5-15% that regulators initially projected. Portfolio companies that have been adopting AI tools without assessing their regulatory classification may find themselves with compliance obligations they did not plan or budget for.

Why Regulatory Due Diligence Needs to Start Before Acquisition Closes

For PE firms evaluating targets, AI regulatory exposure should be part of the diligence process, not something discovered post-acquisition. Bringing a non-compliant AI system into compliance can add 12 to 18 months to a product's market entry timeline and require significant technical and legal resources.

This applies both to companies being acquired for their AI capabilities and to companies that have adopted AI tools operationally. In either case, the GP needs to understand what AI systems are in use, how they are classified under emerging regulations, and what the compliance gap looks like before the deal closes.
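A first pass at "how they are classified" can be a simple screen of each system's use case against the high-risk areas named earlier (employment decisions, credit scoring, insurance risk assessment). The sketch below is a triage aid under that assumption, not a legal classification: the use-case labels and the `AISystem` record are hypothetical, and the Act's own Annex III definitions are narrower and more detailed, so anything flagged still needs legal review.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical shorthand for high-risk areas; the Act's Annex III wording
# is more specific than these labels.
HIGH_RISK_USE_CASES = {
    "employment_decisions",
    "credit_scoring",
    "insurance_risk_assessment",
}


@dataclass(frozen=True)
class AISystem:
    company: str
    name: str
    use_case: str  # label assigned while building the inventory


def flag_for_review(systems):
    """Return {company: [system names]} for systems in a high-risk area."""
    flagged = defaultdict(list)
    for system in systems:
        if system.use_case in HIGH_RISK_USE_CASES:
            flagged[system.company].append(system.name)
    return dict(flagged)


if __name__ == "__main__":
    inventory = [
        AISystem("PortCo A", "Resume screener", "employment_decisions"),
        AISystem("PortCo A", "Invoice OCR", "document_processing"),
        AISystem("PortCo B", "Loan pre-approval model", "credit_scoring"),
    ]
    print(flag_for_review(inventory))
    # {'PortCo A': ['Resume screener'], 'PortCo B': ['Loan pre-approval model']}
```

Running the same screen on a target before close and on every holding afterward gives the GP one comparable view of where compliance gaps are likely to sit.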

Our PE due diligence tool is built for this kind of pre-acquisition assessment, surfacing publicly available data on a target company's financials, leadership, and market presence so that firms can begin analysis before formal diligence starts. And for portfolio companies already in the fold, AI-powered due diligence can help firms assess regulatory exposure across the portfolio without assembling a new team for each company.

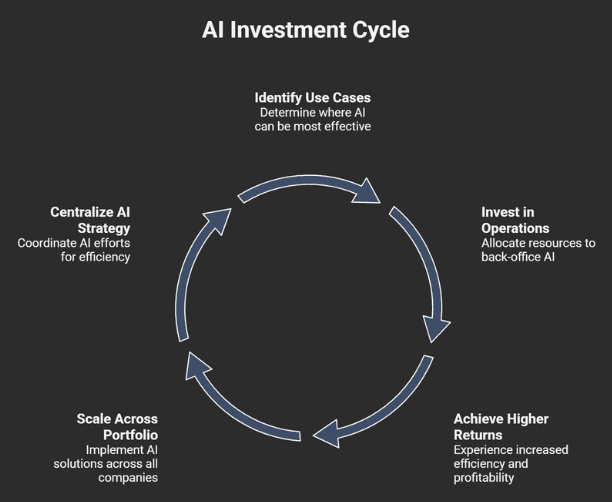

What Do PE Firms That Get AI Transformation Right Actually Do?

Centralized AI Governance Across the Portfolio

The firms seeing measurable returns from AI tend to share a structural characteristic: they manage AI strategy at the firm level rather than leaving it to each portfolio company independently. Bain's research highlights two models that work. Vista Equity Partners has built an internal team dedicated to helping its 85-plus portfolio companies apply AI, with required annual AI goals and shared learning through a CEO council. Apollo Global Management has taken a different approach, building a center of excellence staffed with external AI experts who develop playbooks and diagnostic tools that portfolio companies can adopt.

Both models solve the same problem. They prevent each portfolio company from starting from zero, and they create a feedback loop where what works at one company can be applied across the portfolio. EY's Q4 AI Pulse report found that PE firms report higher focus on responsible AI (82%), greater attention to AI risks (74%), and more transparency with customers (74%) compared to the broader market. The firms taking governance seriously are also the ones seeing returns.

For firms benchmarking where PE is creating value with AI, the patterns are consistent: due diligence acceleration, portfolio monitoring, back-office automation, and compliance. The common thread is that these are all workflow problems, not feature requests.

Workflow-First Implementation Over Feature-First Pilots

McKinsey's 2025 State of AI survey found that out of 25 organizational attributes tested, the redesign of workflows had the biggest effect on a company's ability to see EBIT impact from AI. Not the quality of the model. Not the size of the investment. The willingness to change how work actually gets done.

This finding aligns with what we consistently observe. Portfolio companies that start by mapping their existing workflows, identifying where time and money are being lost, and then applying AI to those specific points produce faster and more measurable results than companies that start by evaluating AI tools and looking for places to use them.

A workflow-first approach to AI implementation changes the sequence. Instead of asking "what can AI do?", it asks "what is this business spending too much time and money on, and can AI reduce that?" The answer is usually yes, and it is usually in operations, not in the places that get the most attention.
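The workflow-first question can be made concrete with back-of-the-envelope arithmetic: hours spent per year, times a loaded hourly cost, times the share of that work AI could plausibly absorb, minus what the AI costs to run. The sketch below ranks workflows on that basis. Every input (hours, rates, automation shares, annual costs) is a hypothetical assumption to be replaced with measured figures, and the model is not a description of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    hours_per_year: float      # time currently spent on the workflow
    loaded_hourly_cost: float  # salary plus overhead per hour
    automatable_share: float   # assumed fraction AI could absorb (0 to 1)
    annual_ai_cost: float      # licenses, integration, and upkeep per year

    def net_annual_savings(self) -> float:
        """Gross labor cost removed by AI, minus the cost of running it."""
        gross = self.hours_per_year * self.loaded_hourly_cost * self.automatable_share
        return gross - self.annual_ai_cost


def rank_workflows(workflows):
    """Order workflows from highest to lowest estimated net savings."""
    return sorted(workflows, key=lambda w: w.net_annual_savings(), reverse=True)


if __name__ == "__main__":
    # Hypothetical figures for illustration only.
    candidates = [
        Workflow("Sales chatbot", 500, 80, 0.2, 40_000),
        Workflow("Month-end reconciliation across three systems", 2_400, 60, 0.5, 20_000),
    ]
    for w in rank_workflows(candidates):
        print(f"{w.name}: {w.net_annual_savings():,.0f}")
    # Month-end reconciliation across three systems: 52,000
    # Sales chatbot: -32,000
```

The value of a model this crude is in the sequence it forces: you have to measure where time goes before any tool enters the conversation.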

Our AI ROI calculator can help firms estimate the financial impact of applying AI to specific workflows before committing to a full implementation, and our AI business audit tool identifies the highest-value opportunities across a business so that investment goes to the right places first.

The Risk Is Not AI Itself

The risk in AI transformation for PE portfolio companies is not that the technology does not work. It is that the way most companies adopt it, without governance, without workflow alignment, and without centralized coordination, turns a powerful tool into an expensive experiment. The firms producing measurable returns are the ones that treat AI as an operational discipline, focus on back-office workflows where ROI is clearest, and bring in partners who understand how enterprise operations actually run.

If you are evaluating where your portfolio companies stand on AI readiness, or where the risks and opportunities lie across your holdings, that assessment is exactly what an AI transformation partner is for. Book a consultation to start the assessment.