Article

Feb 13, 2026

How Private Equity Firms Can Adopt AI Without Creating Data Security Risk

How private equity firms can deploy AI securely while protecting sensitive deal, investor, and portfolio data.

AI can make meaningful improvements across the PE deal lifecycle. But the same data that makes AI useful in private equity is the data that makes PE firms attractive to attackers. A Kroll study of 325 PE executives released in February 2026 found that the average financial impact per cyber incident in private equity is $2.1 million, with 68% of firms reporting that incidents are increasing during the hold period.

This article walks through the specific data security risks AI introduces in private equity and provides a practical framework for adopting AI without exposing deal data, investor information, or portfolio operations to risks that affect valuations and exits.

Why PE Firms Face Elevated AI Data Security Risks

Private equity firms are structurally different from most enterprises when it comes to data security, and those differences matter when AI enters the picture.

The concentration of high-value data

A typical mid-market PE firm manages confidential information across dozens of portfolio companies, hundreds of investors, and thousands of transactions, including financial statements, tax records, LP PII, proprietary deal models, and legal documents under NDA. This data directly affects deal pricing, competitive positioning, and regulatory standing.

When AI tools process this data for due diligence analysis, document review, or financial modeling, it gets sent to APIs, stored in cloud environments, and in many cases logged by third-party vendors whose data retention policies may not align with the confidentiality requirements PE firms operate under.

How AI expands the attack surface

Traditional cybersecurity in PE focused on email security, network access, and endpoint protection. AI introduces a different category of risk. Every time a team member pastes a confidential information memorandum into a general-purpose AI tool, or connects an AI analytics platform to portfolio company financials, there is a new pathway for data to leave the firm's control.

Microsoft's 2026 Data Security Index found that 32% of data security incidents now involve generative AI tools. For PE firms, where a single breach can reduce valuations, delay exits, and trigger LP lawsuits, that number carries significant weight.

The risk is not theoretical. A Canadian PE firm experienced a breach after an investor communications vendor failed to patch a file transfer vulnerability. A U.S. firm had over 7,800 individuals' personal data stolen through a ransomware attack originating via a portfolio healthcare company. In both cases, the PE firm bore the reputational and financial consequences.

What Actually Goes Wrong When PE Firms Adopt AI Without a Security Plan

Most AI-related security incidents in private equity do not start with a sophisticated cyberattack. They start with convenience.

Sensitive data flowing through unsecured AI platforms

The most common failure pattern is straightforward: an associate uploads a confidential deal document to a general-purpose AI tool for a quick summary. The vendor's terms may allow that data to be used for model training. Even if they don't, the data is now stored on infrastructure the firm does not control.

A study of mid-market PE and VC firms found that 95% are using AI in investment deals, but one in three have no formal policy governing that use. The gap between adoption and governance is where most breaches happen.

Shadow AI across the firm

Shadow AI is the AI equivalent of shadow IT. It happens when team members use AI tools that have not been vetted or configured by the firm's compliance function. In PE, this is common because firms are small, move fast, and often lack dedicated IT security teams.

An analyst might use one AI tool for document extraction, another for financial modeling, and a third for market research, none of them reviewed for data handling. Each creates a potential exfiltration point, and because these tools are used informally, the firm has no audit trail showing what data was shared or with which vendor.

The problem compounds at the portfolio level. If a PE firm encourages AI adoption across portfolio companies without establishing baseline security requirements, it inherits the data risk of every tool those companies adopt.

How Should PE Firms Evaluate AI Vendors for Data Security?

Vendor evaluation is where most PE firms can make the biggest immediate impact on their AI security posture. The challenge is that standard vendor assessments were not designed for AI-specific risks.

Questions that matter before signing an AI vendor contract

Traditional vendor due diligence focuses on SOC 2 reports, data encryption, and uptime SLAs. These remain important, but they do not address AI-specific risks. PE firms should ask a different set of questions alongside the standard ones.

Where is the data processed and stored? Many AI tools route data through multiple jurisdictions, which has direct implications under GDPR and the EU AI Act for firms with European LPs.

Does the vendor retain input data, and for how long? Some platforms store user inputs to improve their models, meaning proprietary deal information may persist in environments the firm cannot audit.

Can the firm deploy the tool within its own infrastructure? On-premises or private cloud deployment eliminates the risk of data leaving the firm's environment, which is often the right choice for due diligence and deal evaluation.

What happens to the data if the vendor relationship ends? Data portability and deletion guarantees matter, including confirmation that the vendor purges all data from backups and training derivatives.

These questions should be standardized into vendor onboarding, not treated as a one-time exercise.
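One way to standardize these questions is to record each vendor's answers in a fixed structure and derive the open issues from it. The sketch below is illustrative only: the `VendorAssessment` fields, the `open_issues` function, the region codes, and the vendor "ExampleAI" are all hypothetical names chosen for this example, not part of any real tool or standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorAssessment:
    """Answers to the AI-specific questions for one vendor (hypothetical schema)."""
    name: str
    processing_regions: list[str]        # where data is processed and stored
    retains_inputs: bool                 # does the vendor keep user inputs?
    retention_days: Optional[int]        # None if unknown or undisclosed
    private_deployment_available: bool   # on-premises or private cloud option
    deletion_guaranteed_on_exit: bool    # purge incl. backups and training derivatives


def open_issues(v: VendorAssessment, allowed_regions: set[str]) -> list[str]:
    """Return the findings a reviewer should resolve before signing."""
    issues = []
    outside = sorted(set(v.processing_regions) - allowed_regions)
    if outside:
        issues.append(f"data processed outside approved regions: {', '.join(outside)}")
    if v.retains_inputs and v.retention_days is None:
        issues.append("vendor retains inputs but has not stated a retention period")
    if not v.private_deployment_available:
        issues.append("no on-premises or private cloud deployment option")
    if not v.deletion_guaranteed_on_exit:
        issues.append("no contractual deletion guarantee at end of relationship")
    return issues


vendor = VendorAssessment(
    name="ExampleAI",  # fictional vendor used only for illustration
    processing_regions=["EU", "US"],
    retains_inputs=True,
    retention_days=None,
    private_deployment_available=False,
    deletion_guaranteed_on_exit=True,
)
findings = open_issues(vendor, allowed_regions={"EU"})
for finding in findings:
    print("-", finding)
```

Which findings block a contract and which are acceptable with compensating controls is a judgment for the firm; the point of the structure is that every vendor is asked the same questions and the answers are kept on file.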

The difference between compliance certifications and actual security

A SOC 2 Type II report tells you that a vendor's controls were audited. It does not tell you whether those controls are adequate for the specific sensitivity of PE deal data.

PE firms should look beyond certifications and ask for evidence of data isolation between customers, encryption at rest and in transit, incident response timelines, and contractual breach notification windows. For firms evaluating AI in private equity due diligence, this level of vendor scrutiny directly affects the integrity of the analysis and the firm's liability if something goes wrong.

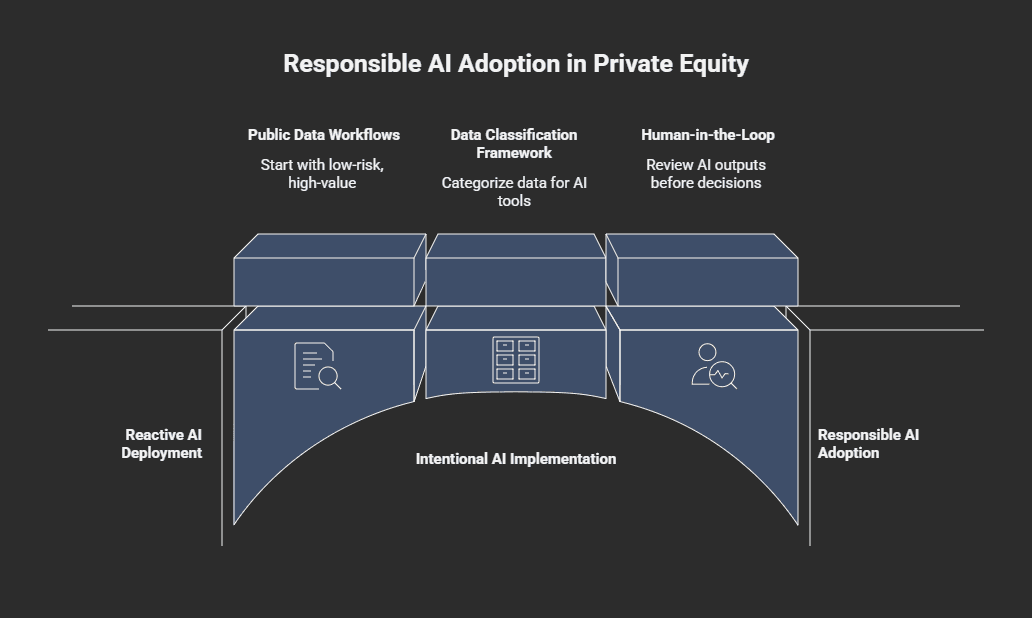

A Practical Framework for Secure AI Adoption in Private Equity

The firms that adopt AI successfully in private equity do not start with the most complex or sensitive use case. They start with a structured approach that limits risk exposure while building internal confidence and capability.

Start with low-risk, high-value workflows

Not every AI use case requires access to confidential deal data. Some of the highest-value applications involve publicly available information: market research, competitive analysis, news monitoring, and initial target screening. These workflows deliver measurable time savings without exposing sensitive data.

If your firm is exploring where AI fits across the deal lifecycle, our PE due diligence tool can help you analyze publicly available company data before formal diligence begins without requiring access to proprietary information.

The progression should be intentional: public data workflows first, then internal operational workflows like portfolio reporting, then deal-sensitive data with appropriate controls in place.

Build data classification before you build AI pipelines

PE firms that skip data classification before deploying AI almost always end up in a reactive position. Without clear categories, there is no basis for determining which AI tools are appropriate for which workflows.

A practical framework does not need to be elaborate. Four tiers work for most PE firms. Public data (market research, published financials, news) can flow through most AI tools with minimal risk. Internal data (meeting notes, operational reports) is suitable for vetted platforms with data handling agreements. Confidential data (deal documents, LP information, portfolio financials) should only be processed by AI deployed within the firm's own infrastructure or by vendors with strict data isolation. Restricted data (material nonpublic information, legally privileged communications) should generally not be processed by third-party AI tools at all.

This classification becomes the foundation for every AI decision. When someone proposes a new tool, the first question is: what classification of data will it access?
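As a rough illustration of how the tiers can drive tool decisions, the sketch below maps each tool to the highest tier it has been approved for and answers the "what classification of data will it access?" question with a lookup. The tool names and the `APPROVED_UP_TO` registry are hypothetical, and blocking restricted data for every tool is a simplifying assumption of this sketch rather than a rule stated above.

```python
from enum import IntEnum


class DataTier(IntEnum):
    PUBLIC = 1        # market research, published financials, news
    INTERNAL = 2      # meeting notes, operational reports
    CONFIDENTIAL = 3  # deal documents, LP information, portfolio financials
    RESTRICTED = 4    # material nonpublic information, privileged communications


# Hypothetical tool registry: the highest tier each tool has been approved for.
APPROVED_UP_TO = {
    "general_purpose_chatbot": DataTier.PUBLIC,
    "vetted_saas_platform": DataTier.INTERNAL,
    "self_hosted_model": DataTier.CONFIDENTIAL,
}


def is_allowed(tool: str, tier: DataTier) -> bool:
    """True if the tool is approved for data at this tier."""
    if tier is DataTier.RESTRICTED:
        return False  # simplification: restricted data is blocked for every tool
    ceiling = APPROVED_UP_TO.get(tool)
    # Tools missing from the registry have not been vetted, so they are denied.
    return ceiling is not None and tier <= ceiling


print(is_allowed("general_purpose_chatbot", DataTier.CONFIDENTIAL))
print(is_allowed("self_hosted_model", DataTier.CONFIDENTIAL))
```

Denying unlisted tools by default is a deliberate choice here: it makes shadow AI visible as a registry gap instead of a silent exception.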

Use human-in-the-loop for anything touching deal data

In private equity, human-in-the-loop is a practical risk management control, not just a compliance concept. It ensures AI outputs are reviewed before they influence investment decisions or reach external parties.

This means AI-generated analysis of deal data is always reviewed by someone with the domain expertise to evaluate both accuracy and data sensitivity. It applies to due diligence summaries, red flag reports, financial model outputs, and any communication incorporating AI-derived insights.

The approach also creates an audit trail demonstrating appropriate oversight, something that matters increasingly as regulators examine AI in investment decision-making. Firms exploring AI use cases reshaping private equity should treat human-in-the-loop as the mechanism that makes responsible adoption possible.
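A minimal sketch of a review gate, assuming a simple in-memory record: an AI output cannot be released until a named reviewer approves it, and every decision is appended to an audit log. The `AiOutput` class, the `release` function, and the identifiers in the example are hypothetical; a real system would persist the log and authenticate reviewers.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AiOutput:
    """An AI-generated artifact awaiting review (hypothetical structure)."""
    doc_id: str
    summary: str
    approved: bool = False
    audit_log: list[dict] = field(default_factory=list)

    def record_review(self, reviewer: str, approved: bool, note: str = "") -> None:
        """Store the reviewer's decision and append it to the audit trail."""
        self.approved = approved
        self.audit_log.append({
            "reviewer": reviewer,
            "approved": approved,
            "note": note,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })


def release(output: AiOutput) -> str:
    """Return the summary only if a human has approved it."""
    if not output.approved:
        raise PermissionError(f"{output.doc_id}: human review required before release")
    return output.summary


draft = AiOutput(doc_id="dd-summary-001", summary="Draft red flag summary (placeholder text)")
draft.record_review("reviewer.name", approved=True, note="Checked against source documents")
print(release(draft))
print(len(draft.audit_log), "review(s) recorded")
```

Calling `release` on an unreviewed output raises `PermissionError`, so the check cannot be skipped by accident, and the log shows who approved what and when.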

Does AI Governance Slow Down Deal Execution?

This is the objection that comes up most frequently when governance is introduced into AI adoption conversations at PE firms. The concern is understandable: PE operates on tight timelines, and anything that adds friction to the deal process feels like a competitive disadvantage.

Why governance actually accelerates AI adoption

Firms that implement AI governance early tend to move faster when deploying new capabilities. Without governance, every new AI tool requires ad hoc evaluation: teams debate whether it is safe, what data can be shared, and who is responsible if something goes wrong.

With a framework in place, these questions are already answered. The firm has pre-approved tools for each data tier, standard vendor contract language, and clear escalation paths. This eliminates the inconsistent decision-making that slows teams down.

What a lightweight governance structure looks like for PE

AI governance for a PE firm does not require a dedicated team or multi-year implementation. A practical structure includes four components: an AI use policy defining approved tools and data access levels; a vendor evaluation process incorporating AI-specific questions triggered by any new tool proposal; a data classification framework mapped to existing workflows; and a quarterly review cadence for reassessing tools, evaluating new options, and updating policies based on regulatory changes.

This can be implemented in weeks and does not require specialized AI expertise. It requires someone at the firm, typically a COO, compliance officer, or operations lead, who owns it and ensures it stays current.
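The quarterly review cadence is the component most likely to lapse, and a very small check can keep it honest. The sketch below assumes a register of approved tools with the date each was last reviewed and treats "quarterly" as 90 days; the tool names, dates, and the `overdue_tools` function are hypothetical.

```python
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=90)  # assumed stand-in for "quarterly"

# Hypothetical register: tool name -> date of its last governance review.
last_reviewed = {
    "self_hosted_model": date(2026, 1, 15),
    "vetted_saas_platform": date(2025, 9, 30),
}


def overdue_tools(register: dict[str, date], today: date) -> list[str]:
    """Return tools whose last review is older than the review interval."""
    return sorted(
        tool for tool, reviewed in register.items()
        if today - reviewed > REVIEW_INTERVAL
    )


print(overdue_tools(last_reviewed, today=date(2026, 2, 13)))
# prints ['vetted_saas_platform']
```

Whoever owns the framework can run a check like this on a schedule and treat any non-empty result as an agenda item.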

For firms building broader AI implementation strategies, data security governance should be integrated from the beginning rather than layered on after deployment.

What PE Firms Should Do Before the Regulatory Window Closes

The regulatory environment around AI and data security is moving faster than most PE firms realize. Firms that wait for finalized regulations before acting will find themselves retrofitting compliance into systems that were not designed for it.

EU AI Act, SEC priorities, and state-level laws converging in 2026

The EU AI Act's obligations for high-risk systems are scheduled to apply from August 2026, and penalties under the Act reach up to 7% of global annual turnover for the most serious violations. For PE firms with European LPs or cross-border deal activity, high-risk AI systems require documented risk assessments, audit trails, and demonstrated human oversight.

In the U.S., the SEC has made AI-related risk disclosure and AI washing a top enforcement priority for 2026. Colorado's Algorithmic Accountability Law took effect in February 2026, and California has multiple AI transparency laws scheduled for this year. The regulatory landscape is fragmented, but the direction is consistent: firms using AI on sensitive data will be expected to demonstrate governance and accountability.

How compliance becomes a competitive advantage at exit

Buyers and secondary market participants are increasingly evaluating AI governance as part of due diligence. A firm that can demonstrate structured AI governance (approved tools, data classification, audit trails, vendor management) presents less risk than one that cannot.

This applies at the portfolio level as well. PE firms that establish baseline AI governance across portfolio companies and can demonstrate compliance at exit position those companies for stronger valuations. Understanding the hidden costs that cause AI projects to fail is part of this equation: the cost of governance now is a fraction of the cost of a breach or regulatory action later.

Frequently Asked Questions

Can PE firms use general-purpose AI tools like ChatGPT for deal work?

Not for confidential data. General-purpose tools may retain inputs and lack the data isolation PE requires. Use them for public information only, and deploy enterprise or self-hosted AI for anything involving deal documents, LP data, or portfolio financials.

What is the biggest AI data security risk specific to private equity?

Uncontrolled data flowing through unvetted third-party AI tools. PE firms are lean on IT oversight, and team members often adopt AI informally. Without a use policy and vendor evaluation process, sensitive deal data ends up in environments the firm cannot monitor or control.

How long does it take to implement AI governance at a PE firm?

A practical governance framework (use policy, vendor process, data classification, review cadence) can be implemented in two to four weeks. It does not require specialized AI expertise or dedicated headcount, just clear ownership and consistent follow-through.

Does the EU AI Act apply to U.S.-based PE firms?

Yes, if the firm has European LPs, portfolio companies in the EU, or processes data of EU residents. High-risk AI systems require documented risk assessments and human oversight. Penalties under the Act reach up to 7% of global annual turnover for the most serious violations.

Should PE firms require AI governance standards across portfolio companies?

Yes. Portfolio company data risk flows upstream to the fund. Establishing baseline AI governance standards across the portfolio reduces inherited risk and strengthens valuations at exit by demonstrating structured oversight to buyers.

Where to Start

Classify your data: know what you have, where it lives, and how sensitive it is. Audit your current AI exposure across the firm and portfolio companies. Establish a governance structure proportional to your firm's size that is clear, owned by someone specific, and reviewed regularly.

If you want to see where AI can add value before committing to infrastructure, Novoslo's AI business audit tool provides a structured assessment of opportunities with implementation recommendations tailored to your business.

The firms that move thoughtfully, with clear data boundaries, vetted tools, and proportional governance, will capture AI's operational advantages without inheriting the risks that come from moving without a plan.