
Feb 4, 2026

A CEO's Guide for Leading AI Transformation Successfully in 2026

Learn how CEOs can lead AI transformation by aligning strategy, leadership, and culture to build future-ready, competitive organizations.

Here's a number worth sitting with: 95% of enterprise AI pilots fail to deliver measurable business impact.

That finding comes from MIT's 2025 State of AI in Business report. BCG's research lands in the same territory: 74% of companies struggle to achieve and sustain value from AI. And according to recent market research, 42% of companies abandoned most of their AI initiatives in 2025, up sharply from just 17% the year before.

These pilots aren't failing because the technology doesn't work. The models are better than ever. The failure is happening at the leadership level. CEOs are either delegating AI to IT teams that lack a strategic mandate, or they're chasing tools without asking what business problem they're actually solving.

The CEOs who get this right are pulling ahead fast. They're not treating AI as a technology project. They're treating it as a leadership challenge, one that requires a different way of thinking about vision, risk, culture, and execution.

This guide gives you a practical framework for leading AI transformation, focused on what actually works based on what's happening inside organizations right now.

Understanding AI Leadership

The Importance of AI-First Leadership

AI-first leadership doesn't mean being a technologist. It means treating AI as a core business capability: not an experiment, not a side project, not something to delegate entirely.

When Microsoft CEO Satya Nadella restructured his responsibilities in late 2025, he did it specifically to increase his focus on AI. His reasoning was direct: Microsoft's continued success depends on equipping customers with AI capabilities. He's been CEO for over a decade. Shares have risen 11-fold under his leadership. And he still saw AI mastery as non-negotiable for staying relevant.

That's the bar. If a CEO leading a $3 trillion company thinks AI requires more of his personal attention, it probably requires more of yours.

AI-first leadership means setting the vision for how AI will change your business and taking personal accountability for whether it delivers. It means making AI a standing agenda item at the board level, not just a quarterly technology update. It means asking hard questions about data, workflows, and organizational readiness before signing off on tools.

The World Economic Forum puts it simply: CEOs set the AI vision for the company. CIOs and CTOs build the processes that realize that vision. But the vision has to come from the top, and it has to be specific enough to guide execution.

Traits of Effective AI Leaders

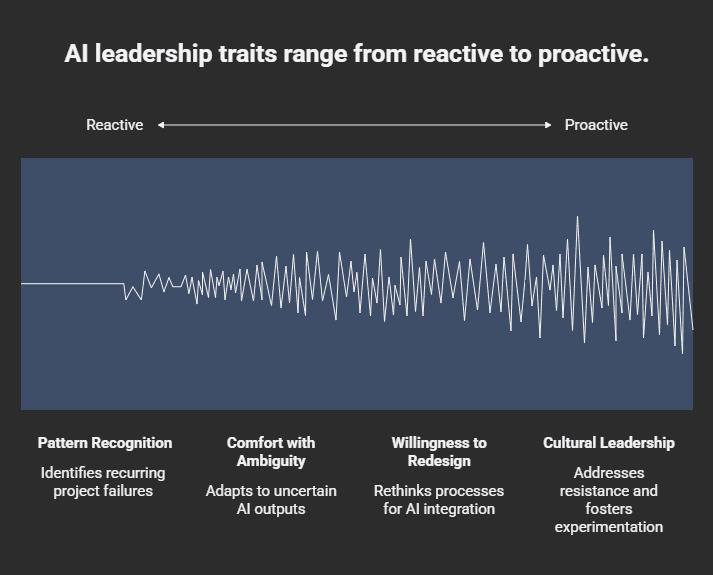

The CEOs who are succeeding with AI share a few common traits. None of them are technical.

Pattern recognition over technical depth. You don't need to understand how transformer models work. You need to recognize when your organization is repeating the same mistakes that kill AI projects: starting with tools instead of problems, underinvesting in data infrastructure, and treating AI as a one-time project instead of an iterative capability.

Comfort with ambiguity. AI introduces uncertainty that traditional IT projects don't. Outputs can vary. Models drift. Results take time to compound. Effective AI leaders don't wait for certainty. They build systems that learn and adapt.

Willingness to redesign workflows. IBM's 2025 CEO Study found that organizations seeing real returns from AI aren't just adding automation to existing processes. They're fundamentally rethinking how work gets done.

Cultural leadership. AI transformation fails more often from resistance than from technology. Employees worry about job security. Middle managers protect their territory. Effective AI leaders address this head-on, communicating why AI matters, involving employees in redesigning their own workflows, and creating space for experimentation.

Your role isn't to be the smartest person in the room about AI. Your role is to create the conditions where AI can actually deliver value.

Assessing and Building AI Readiness

Evaluating Your Leadership Team's AI Readiness

Before you can lead AI transformation, you need to know whether your leadership team is ready to execute it.

Research from Russell Reynolds found that only 32% of leaders feel confident they have the right skills to implement AI in their organizations. That gap isn't just about technical knowledge. It's about mindset: whether your C-suite can evolve their ways of working, embrace experimentation, and lead through uncertainty.

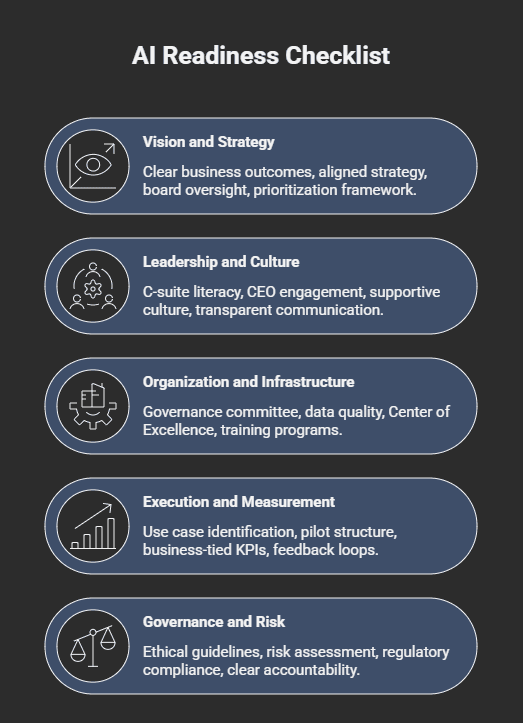

Here's a practical assessment framework:

Strategic clarity. Can your leadership team articulate how AI connects to your business strategy? Not "AI will make us more efficient," but specific outcomes tied to revenue, cost, or competitive advantage.

Data maturity. Does your CIO or CDO have a clear picture of your data quality, accessibility, and governance? 64% of organizations cite data quality as their top barrier to AI success. If your leadership team can't answer basic questions about data readiness, that's a red flag.

Risk appetite. Is your team comfortable with the iteration and occasional failure that AI requires? Or do they expect AI projects to deliver predictable outcomes on fixed timelines?

Cultural capacity. Can your CHRO and line leaders lead the change management that AI requires? This includes training, communication, and workforce redesign.

If you find gaps, that's not a reason to delay. It's a reason to build capability intentionally. If you want a structured way to evaluate where AI fits in your business, our AI business audit tool can help identify opportunities across your operations.

Developing AI Literacy Among Executives

AI literacy doesn't mean your CFO needs to take a machine learning course. It means your leadership team understands enough to make good decisions: what AI can and can't do, where it creates value, and what it requires to work.

Practical steps:

Executive briefings, not training programs: Bring in practitioners who can show real examples from your industry. Abstract education doesn't stick. Concrete use cases do.

Hands-on exposure: Have your leadership team actually use AI tools, not in demos but for real work. Summarize a document. Analyze a dataset. Draft an email. The understanding that comes from use is different from the understanding that comes from presentations.

Cross-functional dialogue: Create forums where business leaders and technical leaders can work through AI opportunities together. The best AI ideas rarely come from either group in isolation.

External pattern recognition: Study what's working at companies similar to yours. Our collection of real-world AI transformation examples can help you see patterns you might apply.

The goal isn't to turn your leadership team into technologists. It's to give them enough literacy to ask the right questions and make informed tradeoffs.

Creating a Vision and Strategy for AI

Defining Clear Objectives

AI initiatives fail when they start with "we should use AI" instead of "we need to solve this problem."

The most successful AI strategies start with business outcomes, not technology.

What are you trying to achieve?

Reduce operational costs?

Improve customer experience?

Accelerate decision-making?

Enter new markets?

Once you're clear on outcomes, work backward to AI. Google Cloud's framework suggests a dual approach: top-down strategic priorities connected to bottom-up feedback from teams who understand where work actually breaks down.

The questions that matter:

What outcomes are we trying to deliver? Be specific. "Improve efficiency" isn't a strategy. "Reduce order processing time by 40%" is.

Which teams are ready to use AI? Do they have the data, the skills, and the willingness to change their workflows?

Where do we have the right data? AI without quality data is expensive guesswork.

What are the second-order effects? How will AI change your business model, your customer relationships, your competitive position?

If you can't answer these questions, you're not ready to invest. If you can, you're ready to prioritize.

Balancing AI Ambitions with Realistic Expectations

Most AI projects fail not because they aim too high, but because they're poorly scoped.

A practical prioritization framework uses two dimensions: business value and implementation feasibility.

High value, high feasibility: Start here. These are your quick wins, often using AI features already embedded in your existing platforms. According to RSM's research, many organizations don't realize that AI is already built into their ERP, CRM, and productivity tools. An audit of what you already have can unlock value without new procurement.

High value, low feasibility: These are your strategic bets. They require more investment in data infrastructure, workflow redesign, or organizational change. Plan for them, but don't start here.

Low value, high feasibility: Be careful. These are the projects that feel easy but don't move the needle. They consume resources and create the illusion of progress.

Low value, low feasibility: Don't do these.
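The four quadrants above can be sketched as a simple scoring exercise. This is a minimal illustration, not a prescribed method: the 1-to-5 scoring scale, the threshold of 3, and the example initiatives are all assumptions made up for this sketch, and your own scoring criteria will differ.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    value: int        # business value score, 1 (low) to 5 (high); assumed scale
    feasibility: int  # implementation feasibility score, same assumed scale


def quadrant(item: Initiative, threshold: int = 3) -> str:
    """Map an initiative to one of the four value/feasibility quadrants."""
    high_value = item.value >= threshold
    high_feasibility = item.feasibility >= threshold
    if high_value and high_feasibility:
        return "start here"
    if high_value:
        return "strategic bet"
    if high_feasibility:
        return "be careful"
    return "don't do"


# Hypothetical portfolio; names and scores are invented for illustration.
portfolio = [
    Initiative("Enable AI features in existing CRM", value=4, feasibility=5),
    Initiative("Rebuild forecasting on unified data", value=5, feasibility=2),
    Initiative("Auto-generate meeting room names", value=1, feasibility=5),
    Initiative("Custom model with no data source", value=2, feasibility=1),
]

for item in portfolio:
    print(f"{item.name}: {quadrant(item)}")
```

The value of an exercise like this is less in the code than in forcing the leadership team to agree on scores. Disagreement about whether an initiative is a 2 or a 4 on value usually exposes unclear objectives.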

The CEOs getting results understand that AI transformation is not about deploying the most advanced models. It's about solving the most valuable problems with the right level of technology. Understanding the difference between AI agents and AI tools can help you avoid wasting money on the wrong solution.

Building an AI-Ready Organization

Investing in AI Talent and Infrastructure

You don't need to hire an army of data scientists to succeed with AI. What you need is the right structure.

Most organizations benefit from a hybrid model: a small central team that owns platforms, patterns, and governance, combined with embedded teams in business units who understand domain-specific use cases.

McKinsey took this approach to an extreme, deploying over 25,000 AI agents to reach a 1:1 human-to-agent ratio. You don't need that scale, but the principle holds. See what McKinsey's AI workforce means for your business.

WWT's research calls this the "AI Center of Excellence" model. The central team provides:

Reusable infrastructure and tooling

Governance frameworks and risk management

Vendor management and technology standards

Training and enablement resources

Business unit teams provide:

Deep understanding of workflows and pain points

Domain expertise to validate AI outputs

Change management within their functions

Feedback loops to improve models over time

On infrastructure, the critical investment is data, not AI tools. Companies with strong data integration achieve 10.3x ROI from AI initiatives versus 3.7x for those with poor data connectivity. Before you buy another AI platform, ask whether your data is clean, accessible, and governed.

If you're in finance, our guide on AI transformation in financial operations covers the specific considerations for that function.

Understanding the hidden costs that cause AI projects to fail can help you budget realistically.

Cultivating a Culture of Innovation

AI transformation requires cultural change. People need permission to experiment, fail, and learn.

Microsoft's Chief People Officer put it directly: "You have to be okay with failure. You have to be okay with being messy. We're talking about the entry point of this transformation."

That doesn't mean chaos. It means structured experimentation with clear guardrails. Practical approaches:

Create safe spaces for pilots. Define bounded experiments where failure is expected and learning is the goal. Share results, including failures, across the organization.

Celebrate learning, not just outcomes. If a pilot fails but generates useful insights, treat that as success. The alternative is a culture where people hide failures and repeat mistakes.

Pair technical and business experts. Don't build AI for people; build it with them. When employees help design AI solutions for their own workflows, adoption is dramatically higher.

Address fear directly. Employees worry about AI replacing their jobs. Acknowledge this. Be honest about what will change. Emphasize that the goal is augmentation, freeing people for higher-value work, and follow through on that promise.

One enterprise sales team we worked with doubled their efficiency using AI-driven insights after redesigning their lead engagement workflow. The technology mattered, but what made it work was involving the sales team in the design process. They understood the problem. They shaped the solution. They adopted it.

Addressing Ethical and Governance Challenges in AI

Establishing Ethical AI Guidelines

AI governance isn't optional. It's a business imperative.

Only 8% of business leaders feel prepared for AI governance risks. Meanwhile, regulators are moving fast: the EU AI Act, the NIST AI Risk Management Framework, and emerging standards like ISO 42001 are creating compliance requirements that will only intensify.

But governance isn't just about avoiding regulatory trouble. It's about building trust with customers, employees, and partners.

Practical principles for ethical AI:

Fairness. AI systems should not perpetuate or amplify bias. This requires regular auditing and testing across different populations.

Transparency. Stakeholders should understand how AI systems make decisions, especially for high-stakes outcomes like lending, hiring, or healthcare.

Accountability. Someone must own AI decisions. When things go wrong, there needs to be a clear path to remediation.

Privacy. Data used to train and operate AI must be handled responsibly, with appropriate consent and protection.

These aren't abstract ideals. They're operational requirements. Build them into your AI development process from day one.

Developing Governance Frameworks

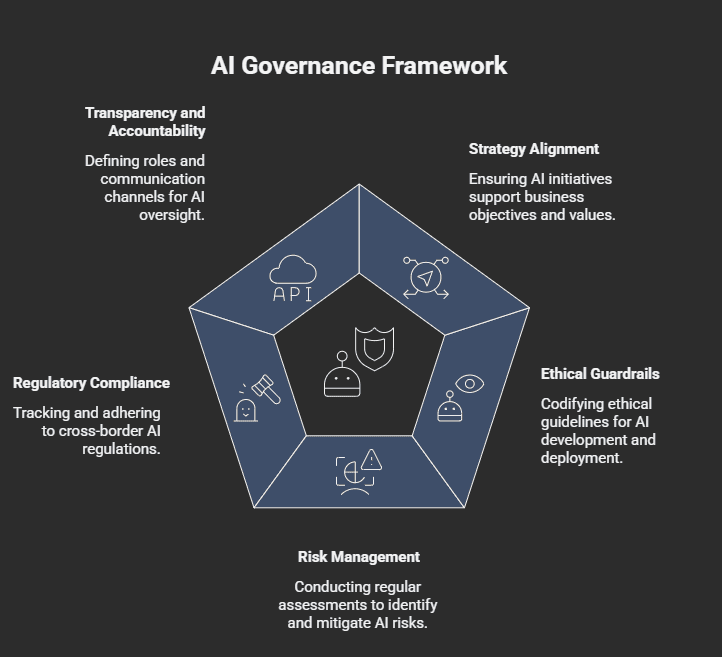

A practical AI governance framework has five pillars:

Strategy alignment: AI initiatives must support business objectives and reflect organizational values. Boards should approve a clear AI vision that balances innovation with risk thresholds.

Ethical guardrails: Codify ethical guidelines into policy covering bias detection, data usage, model transparency, and human oversight.

Risk management: Conduct regular AI risk assessments. Categorize risks across operational, reputational, cybersecurity, and compliance domains.

Regulatory compliance: Track cross-border requirements. Ensure documentation and audit trails meet emerging standards.

Transparency and accountability: Define clear roles and ownership. Establish escalation paths for incidents. Communicate openly with stakeholders about how AI is used.

Only 24% of organizations have enforced AI governance policies. Getting ahead of this is a competitive advantage, not just a compliance checkbox.

For a deeper dive on the partner role in governance, see what a transformation partner actually does and how partners differ from vendors.

Overcoming Resistance and Driving Employee Engagement

Engaging Employees in AI Initiatives

Resistance to AI is normal. People worry about job security, loss of autonomy, and being forced to learn new tools. The CEOs who succeed address this directly rather than hoping it will resolve itself.

The EY CEO Outlook Survey found something encouraging: CEOs' expectations of AI-driven job cuts have dropped significantly, from 46% expecting headcount reductions in January 2025 to just 24% by year end. Leaders are realizing that AI works best when it augments human capability rather than replacing it.

Practical engagement strategies:

Involve employees in workflow redesign: The people doing the work understand where AI can help. Ask them. Incorporate their input. When employees shape AI solutions, they're invested in making them succeed.

Communicate the "why" clearly: People accept change when they understand the reason. Be specific about what AI will change, why it matters, and what it means for their roles.

Provide training that's role-specific: Generic AI training doesn't help. Show people how AI applies to their actual work. BCG's research found that only 36% of employees are satisfied with their AI training, largely because it's too abstract.

Create feedback channels: Let employees report concerns, suggest improvements, and flag problems. Respond to that feedback visibly.

How to Handle Resistance to AI

When resistance emerges, don't dismiss it. Investigate it.

Often, resistance signals a real problem: unclear expectations, inadequate training, poorly designed tools, or legitimate concerns about how AI affects job quality.

Address resistance by:

Listening first: What specifically are people worried about? Job loss? Increased surveillance? Being blamed for AI mistakes? Each concern requires a different response.

Demonstrating value early: Quick wins that make people's jobs easier build credibility for larger changes. If AI removes tedious work without adding new burdens, resistance drops.

Protecting psychological safety: People won't experiment with AI if they fear punishment for mistakes. Make it clear that learning curves are expected and supported.

Leading by example: If the leadership team uses AI visibly and talks about what they've learned, it normalizes adoption throughout the organization.

A workflow-first implementation approach can help you sequence AI adoption in ways that build momentum rather than resistance.

Measuring the Impact of AI Leadership

Key Performance Indicators (KPIs)

Measurement is where AI projects often fall apart. Teams track activity (models deployed, tools purchased, pilots launched) instead of outcomes. For a deeper framework on this, see our guide on the best ways to measure success of AI transformation.

Effective KPIs for AI transformation tie directly to business value:

Operational efficiency:

Time saved on specific tasks (hours per week, per employee)

Cycle time reduction for key workflows

Error rates before and after AI implementation

Financial impact:

Cost reduction in targeted processes

Revenue influenced by AI-enabled capabilities

ROI on AI investment (expect 2-4 year timelines for full realization)

To get a preliminary estimate of what AI could deliver for your business, try our AI ROI calculator.

Adoption and engagement:

Percentage of target users actively using AI tools

Employee satisfaction with AI-enabled workflows

Training completion and competency rates

Strategic outcomes:

Customer satisfaction improvements

Time to market for new products or services

Competitive positioning changes

Avoid vanity metrics. "We deployed 15 AI models" tells you nothing about value. "AI reduced customer response time by 60%" tells you something real.
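Several of the KPIs above reduce to simple before-and-after arithmetic. The sketch below shows one way to compute three of them; the function names, the formulas chosen, and every figure in the example are hypothetical, and real measurement needs agreed baselines and a consistent accounting of costs and benefits.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from a baseline, e.g. cycle time or error rate."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) * 100 / before


def adoption_rate(active_users: int, target_users: int) -> float:
    """Share of target users actively using the AI tool, in percent."""
    if target_users <= 0:
        raise ValueError("target_users must be positive")
    return active_users * 100 / target_users


def simple_roi(total_benefit: float, total_cost: float) -> float:
    """Net benefit divided by cost; 0.5 means a 50% return."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


# Hypothetical figures for illustration only.
response_time_cut = percent_reduction(before=10.0, after=4.0)  # hours
adoption = adoption_rate(active_users=150, target_users=200)
roi = simple_roi(total_benefit=300_000, total_cost=200_000)

print(f"Customer response time reduced by {response_time_cut:.0f}%")
print(f"Adoption among target users: {adoption:.0f}%")
print(f"ROI to date: {roi:.0%}")
```

Each function requires a baseline or a denominator, which is the point: an outcome metric can only be reported if someone measured the "before" state.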

Evaluating Leadership Effectiveness

AI leadership isn't a one-time exercise. It requires ongoing adaptation as technology evolves and organizational learning accumulates.

Quarterly, assess:

Are AI initiatives on track against business objectives? If not, why not? Is it execution, scope, or something more fundamental?

Is the organization building AI capability? Not just deploying tools, but developing the skills, infrastructure, and culture for sustained success.

Are governance frameworks keeping pace? Regulations and best practices are evolving fast. Are you staying ahead?

What have you learned? The best AI leaders treat every project, successful or not, as a source of organizational learning.

Build feedback loops into your AI strategy. Adjust based on what you learn. The organizations pulling ahead aren't the ones with the best initial plans; they're the ones that learn and adapt fastest.


Conclusion

This isn’t about moving fast. It’s about moving with intent.

Most AI initiatives stall because there’s no clear strategy, no ownership, and no roadmap tying AI to real business outcomes.

Novoslo helps leadership teams fix that.

We work with CEOs to assess where AI can actually create value, define priorities, and build a practical path forward.

If you want clarity on what to do next and what to stop doing, book a call with Novoslo.