Feb 23, 2026

Which AI Workflow Automation Is Best for Writing?

Most companies are asking, "Which AI writing automation tool should we use?" The tool is rarely the problem. The workflow underneath it is.

A recent study published in Organizational Dynamics found that 74% of companies struggle to get value from their AI investments, and the cause is not the technology. It is the absence of any framework that connects AI to how people actually work. Writing is one of the first places this gap becomes visible because writing touches every department, every customer interaction, and every piece of content a business puts into the world.

If your team is producing content that feels generic, takes too long to review, or keeps missing the mark on brand voice, the answer probably is not a better AI writing tool. It is a better workflow around the tools you already have.

This post breaks down how to evaluate AI workflow automation for writing by looking at the problem in layers, not features. We will walk through a framework we use internally at Novoslo, compare the platforms that actually matter, and give you a decision model based on where your team sits today.

Why Most AI Writing Workflows Fail Before They Start

The most common mistake we see is what we call the "tool-first" trap. A team picks an AI writing tool, maybe Jasper, maybe ChatGPT, maybe Claude, and expects the output to be production-ready. When it is not, they blame the model.

But the model is usually doing exactly what it was asked to do. The problem is that nobody designed the steps around it.

Here is what the data says. AI writing tools are strong at grammar and sentence structure, with studies showing 93 to 96 percent accuracy on mechanical corrections. Where they fall apart is factual accuracy, which lands between 74 and 86 percent depending on the domain. That gap between "sounds right" and "is right" is where most enterprise content gets into trouble.

McKinsey's 2025 AI survey reinforces this pattern. Roughly half of organizations using AI reported at least one negative consequence, with nearly a third of those tied directly to inaccuracy. When you combine that with the broader finding that 70 to 85 percent of AI initiatives fail to meet their expected outcomes, it becomes clear that the bottleneck is not generation. It is everything that happens after generation.

Teams that treat AI as a drafting assistant and build nothing around quality control, brand alignment, or factual review will produce content that sounds confident while being wrong in ways they may not even notice. If you are implementing AI in your business for writing specifically, the first thing to get right is the process, not the tool.

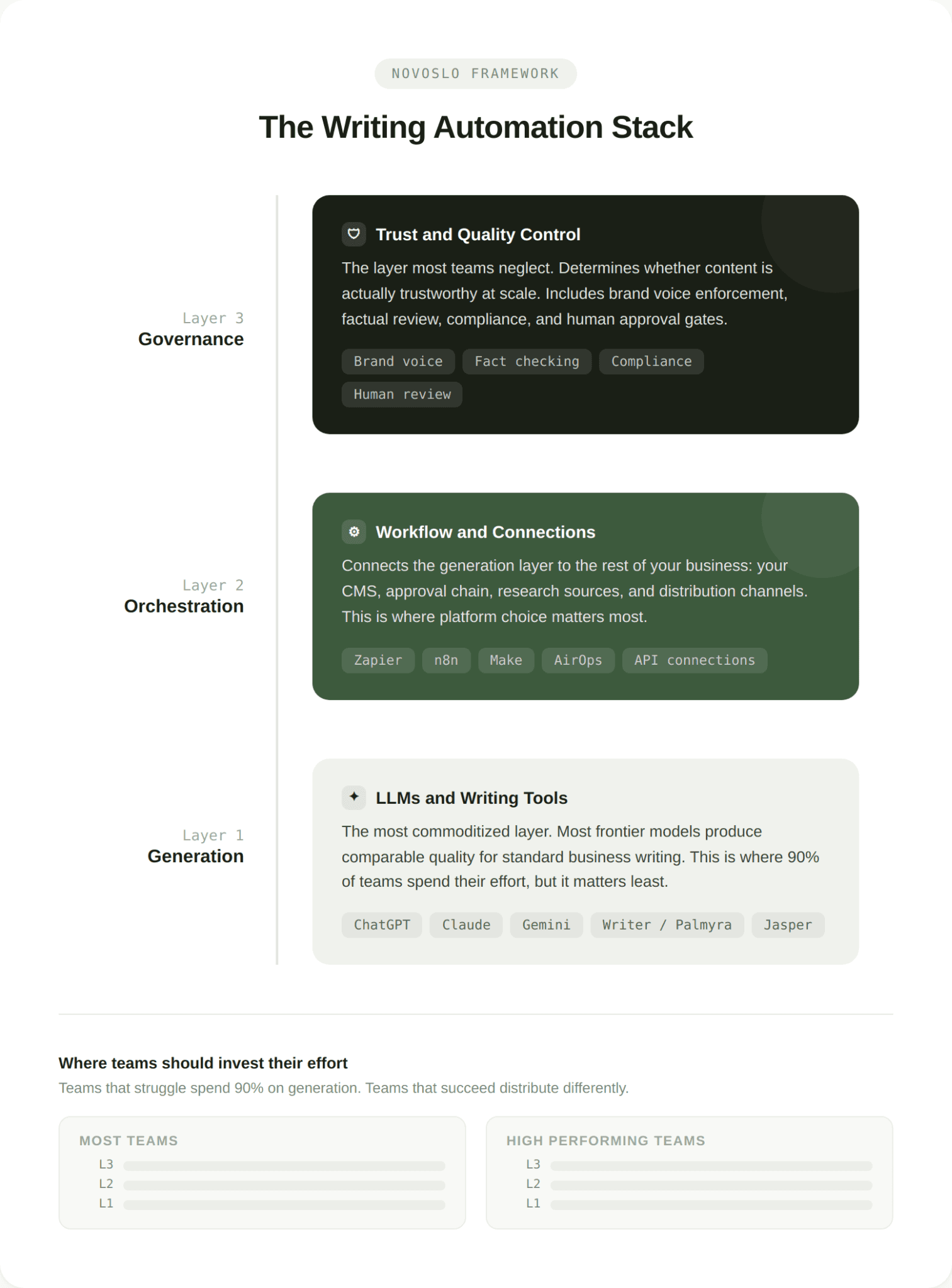

The Writing Automation Stack: Three Layers Most Teams Get Wrong

We use a framework internally called the Writing Automation Stack. It breaks AI writing automation into three layers, and the key insight is that most teams invest almost everything into the first layer while ignoring the other two.

Layer 1: The Generation Layer

This is the part everyone focuses on. It includes the LLMs and writing tools that produce the first draft, whether that is ChatGPT, Claude, Gemini, Writer's Palmyra models, or any other tool your team uses to generate text.

The generation layer is important, but it is the most commoditized part of the stack. Most frontier models produce comparable quality for standard business writing. The differences show up at the edges, in tone control, long-document coherence, and domain specificity, but for most use cases the model itself is not the deciding factor.

Layer 2: The Orchestration Layer

This is where the workflow platforms live. Tools like Zapier, n8n, Make, and AirOps sit in this layer. Their job is to connect the generation layer to the rest of your business: your CMS, your approval chain, your research sources, and your distribution channels.

Most teams either skip this layer entirely (doing everything manually between steps) or bolt it on as an afterthought. The result is a workflow that looks automated on paper but still requires someone to copy and paste between tools, manually trigger reviews, or remember to check facts before publishing.

If you have read our breakdown of AI agents versus AI tools, this is where that distinction matters most. A tool generates text. An agent can move that text through a pipeline, trigger a review, check it against your brand guidelines, and queue it for publishing, all without someone babysitting each step.

Layer 3: The Governance Layer

This is the layer almost everyone neglects, and it is the one that determines whether your content is actually trustworthy at scale. Governance includes brand voice enforcement, factual review, compliance checking, and human approval gates.

Enterprise writing platforms like Writer have built their entire product around this layer, using proprietary models trained on business writing data combined with guardrails that enforce style guides and regulatory requirements. But you do not need an enterprise platform to build governance into your workflow. You need clear rules about what gets checked, by whom, and at what stage.

The teams that get the most value from AI writing automation tend to spend about 20 percent of their effort on the generation layer, 40 percent on orchestration, and 40 percent on governance. The teams that struggle spend 90 percent on generation and wonder why the output still needs heavy editing.

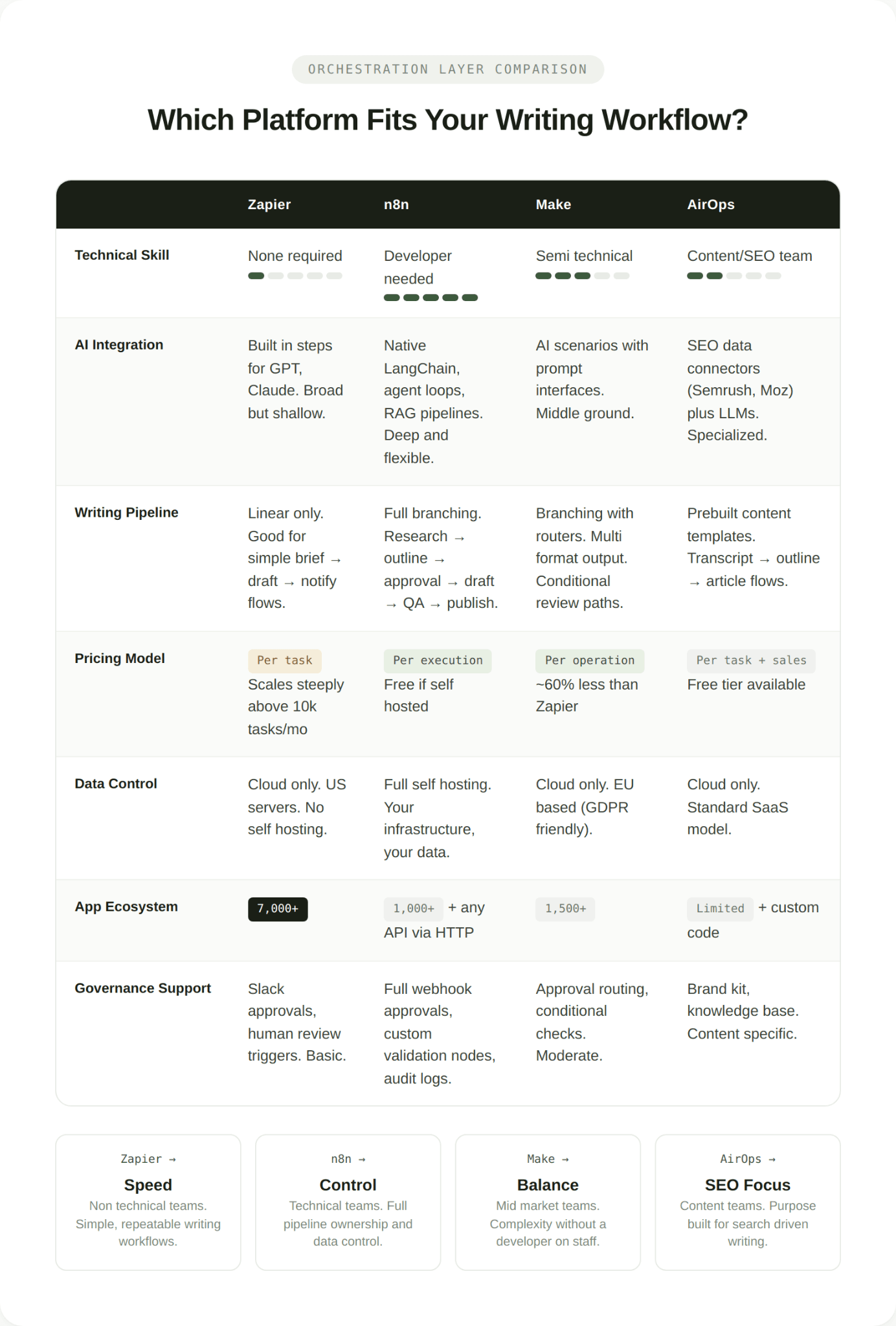

Which Orchestration Platform Actually Works for Writing?

If the generation layer is table stakes and the governance layer is about your internal rules, then the orchestration layer is where platform choice makes the biggest practical difference. Here is how the main options compare specifically for writing workflows.

Zapier

Zapier connects over 7,000 apps and requires no technical background to set up. For writing, it works well when the workflow is straightforward. For example, a trigger comes in from a form or a CRM update, Zapier sends a prompt to ChatGPT or Claude, the output gets saved to a Google Doc or pushed to a CMS, and a Slack notification tells someone to review it.

Where Zapier gets limited is in complex, branching writing workflows. If you need the AI to research a topic, draft based on that research, check the draft against brand guidelines, loop through a revision, and then format for multiple channels, Zapier's linear architecture starts to strain. Pricing also scales with task volume, which can get expensive for high-frequency content operations.

Best fit: non-technical teams that need simple, repeatable writing workflows across common business apps.

n8n

n8n is built for developers and technical operations teams. It is self-hostable, source-available under a fair-code license, and treats AI as a core workflow component rather than just another integration. It has native support for LangChain, agent loops, tool calling, memory management, and retrieval-augmented generation pipelines, which means you can build writing workflows that maintain context across multiple steps, pull from internal knowledge bases, and make decisions based on the content they are processing.

For writing specifically, n8n lets you build pipelines where a single brief can trigger competitive research, generate an outline, get human approval via a webhook, produce a draft with citations, run it through a tone analysis node, and publish to your CMS. The trade-off is setup complexity. You need someone comfortable with APIs and some JavaScript to get the most out of it.

Best fit: technical teams that want full control over their writing pipeline and need to connect custom or internal tools.

Make

Make (formerly Integromat) sits between Zapier and n8n in both power and complexity. Its visual scenario builder handles branching logic, routers, and iterators more naturally than Zapier does, which makes it better for writing workflows that involve multiple output formats or conditional paths. Pricing is also significantly lower than Zapier at equivalent volumes, roughly 60 percent less for comparable usage.

For writing teams, Make works well when you need a multi-step workflow that a semi-technical person can maintain. Think: intake a brief via Typeform, research with a web search API, draft with Claude, route the draft to different reviewers based on content type, and publish to WordPress.

Best fit: mid-market teams that need more workflow complexity than Zapier allows without requiring a developer on staff.
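The "route the draft to different reviewers based on content type" step is the kind of conditional path a router handles. As a rough illustration only, here is the same logic in plain Python. The content types and reviewer names are hypothetical placeholders, and this is not Make's API, just the decision a router module would encode.

```python
# Hypothetical mapping from content type to reviewer queue (illustrative only).
REVIEWERS_BY_TYPE = {
    "blog": "content-lead",
    "legal": "compliance-team",
    "product": "product-marketing",
}

# Fallback so an unrecognized content type never skips review.
DEFAULT_REVIEWER = "managing-editor"


def route_draft(content_type: str) -> str:
    """Return the reviewer queue for a draft, normalizing case and whitespace."""
    key = content_type.strip().lower()
    return REVIEWERS_BY_TYPE.get(key, DEFAULT_REVIEWER)


if __name__ == "__main__":
    print(route_draft("Legal"))
    print(route_draft("newsletter"))
```

The default reviewer is the design choice worth copying: a routing step that silently drops unknown content types is how unreviewed drafts reach publishing.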

AirOps

AirOps is the most specialized option on this list, built specifically for content marketers and SEO teams. It includes native integrations with tools like Semrush and Moz, connects to content management systems, and offers prebuilt workflow templates for common content tasks.

The strength is specialization. If your writing automation is primarily about producing search-optimized content at scale, AirOps removes a lot of the setup work that a general-purpose tool would require. The weakness is flexibility. It is not designed for workflows outside the content and SEO use case.

Best fit: content and SEO teams that want a purpose-built solution for search-driven writing.

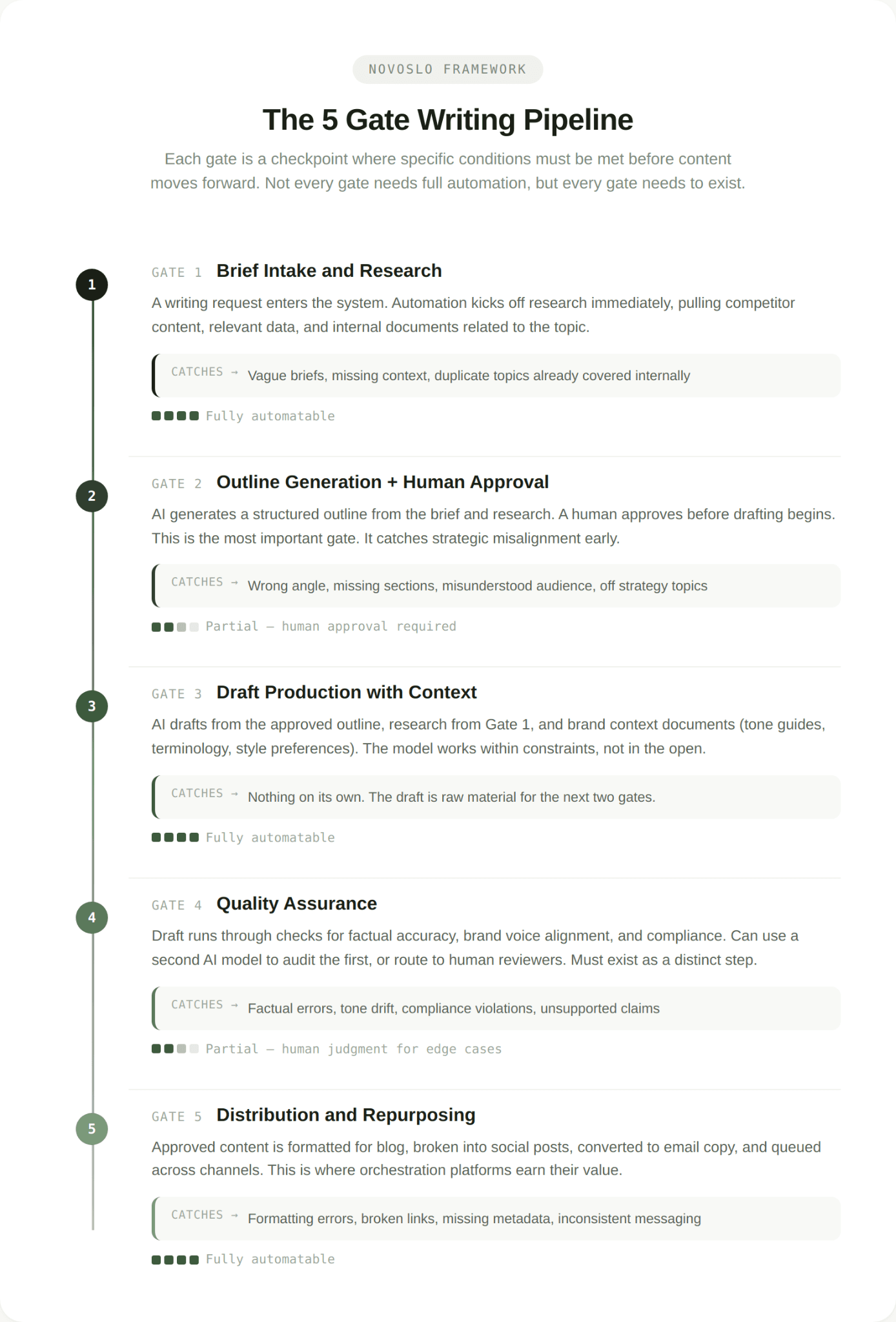

The 5 Gate Writing Pipeline: What a Complete AI Writing Workflow Looks Like

Knowing the tools is useful. Knowing how to connect them into a working system is what actually changes output quality. We use a model called the 5 Gate Writing Pipeline to design writing automation for our clients. Each gate represents a checkpoint where specific conditions must be met before the content moves forward.

Gate 1: Brief Intake and Research

The workflow starts when a writing request enters the system. This could be a form submission, a CRM trigger, a Slack command, or a manual entry. The automation immediately kicks off research, pulling competitor content, relevant data points, and any internal documents related to the topic.

What this gate catches: vague briefs, missing context, topics that have already been covered internally. The research step ensures the AI has real information to work with instead of relying entirely on its training data.
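To make the Gate 1 checks concrete, here is a minimal sketch of a brief validator. The `Brief` fields, the three-word threshold for "vague", and the exact-match duplicate check are all assumptions made for illustration; a real intake step would use whatever fields your form or CRM provides.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Brief:
    # Hypothetical brief fields; adapt to your own intake form.
    topic: str
    audience: str = ""
    goal: str = ""
    source_notes: List[str] = field(default_factory=list)


def check_brief(brief: Brief, published_topics: Set[str], min_topic_words: int = 3) -> List[str]:
    """Return a list of problems. An empty list means the brief passes Gate 1.

    published_topics is expected to contain lowercase topic strings.
    """
    problems = []
    if len(brief.topic.split()) < min_topic_words:
        problems.append("vague brief: topic is too short to act on")
    if not brief.audience or not brief.goal:
        problems.append("missing context: audience and goal are required")
    if brief.topic.strip().lower() in published_topics:
        problems.append("duplicate: topic already covered internally")
    if not brief.source_notes:
        problems.append("no research attached: the draft would rely on training data alone")
    return problems


if __name__ == "__main__":
    published = {"ai writing tools compared"}
    weak = Brief(topic="AI Writing Tools Compared")
    for problem in check_brief(weak, published):
        print(problem)
```

Returning a list of problems rather than a single pass/fail flag lets the automation send the requester a specific message about what to fix.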

Gate 2: Outline Generation and Human Approval

The AI generates a structured outline based on the brief and research. This outline is sent to a human for approval before any drafting begins. This is the single most important gate in the pipeline, because it is where strategic misalignment gets caught early rather than discovered after 2,000 words have been written.

What this gate catches: wrong angle, missing sections, misunderstood audience, off-strategy topics.

Gate 3: Draft Production with Context

Once the outline is approved, the AI drafts the content using the approved structure, the research from Gate 1, and any brand context documents you have loaded into the system (tone of voice guides, terminology lists, style preferences). This is where the generation layer does its work, but it is working within constraints rather than in the open.

What this gate catches: nothing on its own. The draft is not considered reviewed content at this stage. It is raw material that needs to pass through the next two gates.

Gate 4: Quality Assurance

This gate runs the draft through checks for factual accuracy, brand voice alignment, and compliance requirements. Depending on your setup, this can be partially automated (using a second AI model to audit the first model's output, running the text through your style guide, checking links and citations) or fully manual. The key is that it exists as a distinct step rather than being merged with editing.

One enterprise client we worked with doubled their sales team's efficiency by building structured AI review steps into their content workflow. The content itself was not dramatically different, but the consistency and speed of getting it to a publishable state improved significantly.

What this gate catches: factual errors, tone inconsistencies, compliance violations, claims without evidence.
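The automated part of this gate can start very simply. The sketch below shows two rule-based checks: a banned-phrase scan for brand voice and a flag for statistics that appear without a citation. The banned phrases, the citation formats, and the naive sentence splitter are assumptions for illustration. It does not verify that a claim is true; that still needs a human or a dedicated fact-checking step.

```python
import re
from dataclasses import dataclass
from typing import List


@dataclass
class Finding:
    check: str
    detail: str


# Hypothetical style guide entries.
BANNED_PHRASES = ("game-changing", "revolutionary")

# Matches numbers followed by "%" or "percent", e.g. "40 percent" or "12%".
STAT_PATTERN = re.compile(r"\b\d+(?:\.\d+)?\s?(?:%|percent)")

# Assumed citation formats: "[1]" or "(source: ...)".
CITATION_PATTERN = re.compile(r"\[\d+\]|\(source:[^)]+\)", re.IGNORECASE)


def run_qa(draft: str) -> List[Finding]:
    """Return findings that should block publication until someone resolves them."""
    findings = []
    lowered = draft.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            findings.append(Finding("brand_voice", f"banned phrase: {phrase}"))
    # Naive sentence split on terminal punctuation followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", draft):
        if STAT_PATTERN.search(sentence) and not CITATION_PATTERN.search(sentence):
            findings.append(Finding("evidence", f"statistic without citation: {sentence.strip()}"))
    return findings


if __name__ == "__main__":
    text = "Our revolutionary tool cuts review time by 40 percent. Adoption grew 12% last year [1]."
    for finding in run_qa(text):
        print(finding.check, "-", finding.detail)
```

Checks like these are cheap to run on every draft, which leaves reviewer time for the judgment calls that rules cannot make.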

Gate 5: Distribution and Repurposing

The final gate handles formatting, publishing, and repurposing. A single approved piece of content can be automatically reformatted for your blog, broken into social posts, converted into email copy, and queued for publishing across channels. This is where orchestration platforms earn their value, because doing this manually for every piece of content does not scale.

What this gate catches: formatting errors, broken links, missing metadata, inconsistent cross-channel messaging.

The point of the pipeline is not that every gate needs to be fully automated. Some gates will always need a human, and that is fine. The point is that every gate exists, is defined, and has clear criteria for what passes through it. When we work with companies on AI workflow transformation, the 5 Gate model is usually the first thing we implement because it gives teams a shared language for where content is in the process and what needs to happen next.
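One way to picture "every gate exists, is defined, and has clear criteria" is as an ordered list of checks that content must clear one at a time. The sketch below is an illustration under assumptions, not a description of any specific platform: the gate names, the dictionary keys on the content item, and the simple presence checks are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# A gate check inspects the content item and returns a list of blocking issues.
GateCheck = Callable[[Dict], List[str]]


@dataclass
class Gate:
    name: str
    check: GateCheck
    needs_human: bool = False  # True if a recorded human approval is required


@dataclass
class PipelineResult:
    passed: List[str] = field(default_factory=list)
    stopped_at: Optional[str] = None
    issues: List[str] = field(default_factory=list)


def run_pipeline(item: Dict, gates: List[Gate]) -> PipelineResult:
    """Advance the item gate by gate and stop at the first gate with issues."""
    result = PipelineResult()
    for gate in gates:
        issues = list(gate.check(item))
        if gate.needs_human and not item.get("approvals", {}).get(gate.name):
            issues.append("awaiting human approval")
        if issues:
            result.stopped_at = gate.name
            result.issues = issues
            return result
        result.passed.append(gate.name)
    return result


# Hypothetical gate definitions. The draft gate only confirms a draft exists,
# since drafting does not review anything by itself.
GATES = [
    Gate("brief_and_research", lambda i: [] if i.get("research") else ["no research attached"]),
    Gate("outline_approval", lambda i: [] if i.get("outline") else ["no outline"], needs_human=True),
    Gate("draft", lambda i: [] if i.get("draft") else ["no draft"]),
    Gate("quality_assurance", lambda i: list(i.get("qa_findings", []))),
    Gate("distribution", lambda i: [] if i.get("channels") else ["no channels selected"]),
]

if __name__ == "__main__":
    item = {"research": ["competitor notes"], "outline": "1. Intro 2. Framework"}
    outcome = run_pipeline(item, GATES)
    print(outcome.stopped_at, outcome.issues)
```

Because the result records which gate stopped the item and why, it doubles as the shared status language: anyone can see where a piece of content is and what it is waiting on.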

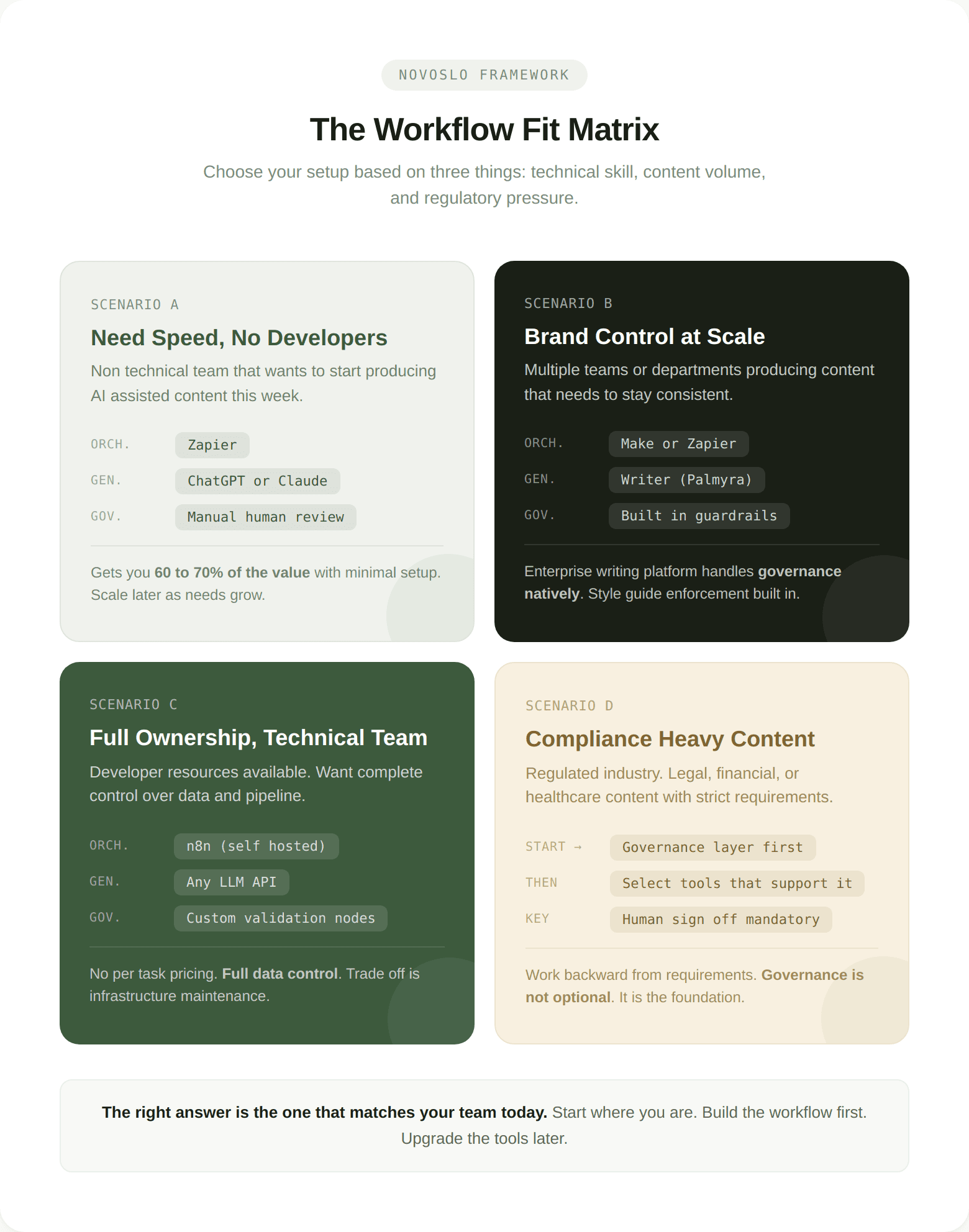

How Do You Choose the Right Setup for Your Team?

The answer depends on three things: your team's technical skill, the volume of content you produce, and how tightly regulated your industry is. Here is a decision framework we call the Workflow Fit Matrix.

If your team is non-technical and needs speed

Start with Zapier connected to ChatGPT or Claude. Build a simple pipeline that handles intake, drafting, and notification. Add human review as a manual step in the middle. This gets you 60 to 70 percent of the value with minimal setup time. As your needs grow, you can add complexity later.

Use the Novoslo AI ROI calculator to estimate what this kind of basic automation would save your team in hours per week before committing to a platform.

If your team needs brand control at scale

Look at Writer or a similar enterprise writing platform as your generation and governance layer, combined with a workflow platform like Make or Zapier for orchestration. Writer's Palmyra models are trained specifically on business writing data and include built-in guardrails for style enforcement and regulatory compliance. This matters if you are producing content across multiple teams or departments where consistency is a real concern.

If you are a mid-market company evaluating this path, our guide on what an AI transformation partner actually does covers how to scope this kind of engagement without overcommitting.

If your team is technical and wants full ownership

n8n is the strongest option here. Self-host it, connect it to whichever LLM APIs you prefer (OpenAI, Anthropic, open-source models), and build the entire pipeline yourself. You get full data control, no per-task pricing, and the ability to customize every step. The trade-off is that someone on your team needs to own the infrastructure.

For teams going this route, understanding the real costs of AI implementation upfront will save you from underestimating what it takes to maintain a self-hosted automation stack over time.

If your content is compliance-heavy

Start with the governance layer and work backward. Define what needs to be checked, what requires human sign-off, and what regulatory requirements apply to your content. Then select tools that support those requirements. In regulated industries like financial operations or healthcare, the governance layer is not optional. It is the foundation that everything else sits on.

You can also run a quick AI business audit to identify where your current writing workflow has the biggest gaps before you start building automation around it.
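The four scenarios above can be read as a small decision function. This is a sketch only: the order in which the conditions are checked (compliance first, then brand control, then technical skill) is an assumption made for illustration, and real teams will often fit more than one scenario.

```python
def recommend_setup(technical: bool, needs_brand_control_at_scale: bool, compliance_heavy: bool) -> str:
    """Map a team profile to one of the four starting points described above.

    The precedence of the checks is an assumption, not part of the matrix itself.
    """
    if compliance_heavy:
        return "Define governance rules first, then select tools that support them"
    if needs_brand_control_at_scale:
        return "Writer or a similar platform for generation and governance, plus Make or Zapier for orchestration"
    if technical:
        return "Self-hosted n8n connected to your preferred LLM APIs"
    return "Zapier connected to ChatGPT or Claude, with a manual human review step"


if __name__ == "__main__":
    print(recommend_setup(technical=False, needs_brand_control_at_scale=False, compliance_heavy=False))
    print(recommend_setup(technical=True, needs_brand_control_at_scale=False, compliance_heavy=True))
```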

The Honest Answer

The best AI workflow automation for writing is the one that matches your team's actual process and fills the gaps you have today. If your problem is speed, fix orchestration. If your problem is quality, fix governance. If your problem is both, start with governance because fast, low-quality content is worse than slow, good content.

Most teams do not need the most powerful tool. They need a complete workflow with clear gates, defined roles, and enough automation to remove the repetitive steps that slow everything down.

The three frameworks in this post, the Writing Automation Stack, the 5 Gate Writing Pipeline, and the Workflow Fit Matrix, are tools we use with clients to cut through the noise and build something that works. If you want help applying them to your specific situation, start a conversation with our team or explore our AI transformation roadmap for a broader view of how writing automation fits into your operations strategy.

What matters is not which tool you pick. What matters is that you build the system around it.