Feb 18, 2026

How Can AI Streamline Operations for My Company?

Discover how AI can streamline operations, boost efficiency, and reduce costs across industries with innovative strategies

Almost every company is using AI in some capacity right now.

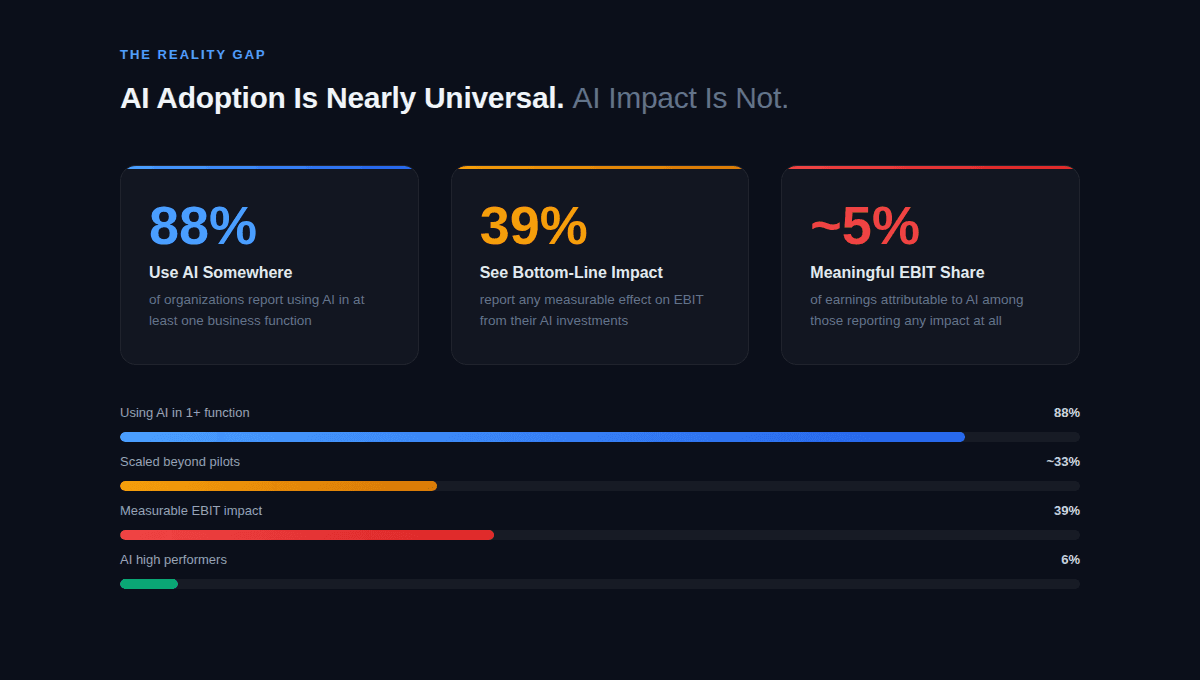

According to McKinsey's 2025 survey of nearly 2,000 organizations across 105 countries, 88% report using AI in at least one business function.

But only 39% of those organizations report any measurable effect on their bottom line from AI, and most of those say it accounts for less than 5% of their earnings. Nearly two-thirds are still running pilots or experiments without scaling anything into real workflows.

The gap between "we use AI" and "AI changed how we operate" is wide, and it's where most companies are sitting right now. This article is for operations leaders who want to close that gap. We cover:

Where AI actually delivers in business operations

Why most projects stall before producing value, and

How to structure an implementation that survives contact with real workflows.

The goal is practical clarity, because the companies getting results from AI are not the ones with the biggest budgets or the most advanced technology.

What AI in Business Operations Actually Means in 2026

Beyond Chatbots: What Operational AI Looks Like Now

When most people hear "AI in business," they think of chatbots or text generators. That's understandable, because those were the most visible early applications.

But in operations, the meaningful work is happening further from the surface, in systems that:

Process documents

Route approvals

Flag anomalies in financial data

Predict equipment maintenance windows

Coordinate logistics across multiple facilities

Deloitte's 2026 enterprise AI report found that two-thirds of organizations using AI report gains in productivity and efficiency. But the report also found that only 20% have seen revenue growth from AI so far, while 74% still consider revenue growth an aspiration rather than a current result.

The pattern is consistent: AI is delivering value in specific operational functions, particularly back-office work, well before it shows up on the income statement as a line item.

The companies seeing early results tend to focus AI on tasks that are high-volume, rules-based, and repetitive, such as:

Invoice processing

Data reconciliation

Report generation

Compliance checks

These aren't glamorous use cases, but they're the ones that produce measurable time savings and error reduction within weeks of deployment, not months.

Why "AI Adoption" and "AI Results" Are Two Different Things

There is a real and persistent disconnect between the number of companies using AI and the number of companies getting material results from it. McKinsey's data shows that only about one-third of organizations have scaled AI across their enterprise, despite nearly all of them running some form of AI project. The majority are stuck in what researchers consistently describe as pilot purgatory: running proof-of-concept projects that never integrate into actual business processes.

The distinction matters because buying a tool and deploying a tool are different activities. A company that gives its team access to a large language model has adopted AI. A company that has rebuilt its contract review process so that AI handles first-pass extraction and humans handle exceptions has integrated AI. The second company is the one seeing ROI. Most organizations are closer to the first.

Where AI Delivers the Most Value in Operations

Why Automating Repetitive Back-Office Work Is the Key

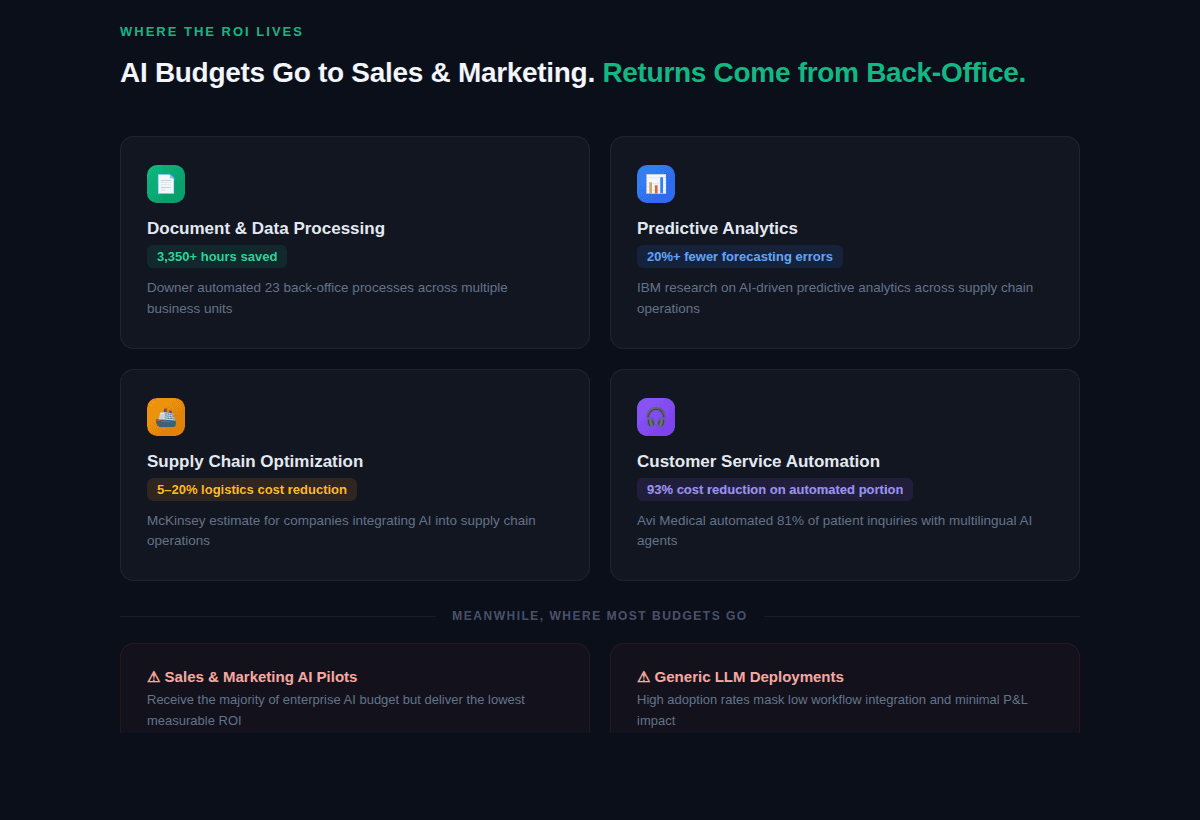

MIT's State of AI in Business report found that while most enterprise AI budgets go toward sales and marketing tools, the highest return on investment comes from back-office automation. That includes eliminating manual data entry, reducing reliance on business process outsourcing, cutting agency costs, and consolidating workflows that currently require multiple people to touch the same document or dataset.

A construction company called Downer, for example, automated 23 processes using a workflow automation platform, saving over 3,350 development hours across multiple business units. An educational institution, Abingdon & Witney College, digitized processes like trip approvals, accident reporting, and expense claims, saving over 1,665 hours on a single workflow. These are not theoretical projections. They are logged operational hours returned to the business.

The pattern across real-world AI transformation examples is consistent: the first place to look for AI value is wherever your team spends the most time on work that doesn't require judgment. If someone is copying data between systems, formatting reports, or reconciling invoices manually, that's a workflow AI can likely handle with high accuracy and significant time savings.

Predictive Analytics for Planning and Decision-Making

Predictive analytics is one of the areas where AI has moved from experimental to operational at meaningful scale. IBM research indicates that companies using AI-driven predictive analytics have reduced forecasting errors by at least 20%, which directly affects inventory costs, production planning, and resource allocation.

What makes predictive analytics useful for operations teams is that it replaces intuition-based planning with data-driven planning without requiring the operations team to become data scientists. Modern AI tools can process historical trends, real-time inputs from sales and logistics systems, and external signals like weather patterns or market shifts to produce forecasts that are continuously updated. The result is fewer stockouts, less overproduction, and tighter alignment between capacity and demand.
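To make "continuously updated forecast" concrete, here is a minimal sketch using simple exponential smoothing, one of the most basic forecasting techniques. It is an illustration only: commercial forecasting tools use far richer models and many more inputs. The function names, the smoothing factor, and the weekly sales figures are all hypothetical.

```python
def exponential_smoothing_forecast(history, alpha=0.3):
    """Return a one-step-ahead forecast from past demand values.

    alpha (0 to 1) controls how strongly recent observations outweigh older ones.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    level = history[0]
    for observed in history[1:]:
        # Blend the newest observation with the running level.
        level = alpha * observed + (1 - alpha) * level
    return level


def mean_absolute_percentage_error(actuals, forecasts):
    """Average of |actual - forecast| / actual, as a fraction (0.1 means 10%)."""
    errors = [abs(a - f) / a for a, f in zip(actuals, forecasts) if a != 0]
    if not errors:
        raise ValueError("need at least one non-zero actual value")
    return sum(errors) / len(errors)


# Hypothetical weekly unit sales for a single product.
weekly_units = [120, 130, 125, 140, 150, 145]
next_week = exponential_smoothing_forecast(weekly_units, alpha=0.5)
```

Each new week of sales is appended to the history and the forecast is recomputed. Tracking the percentage error before and after a new forecasting tool goes live is one way to check whether a claimed reduction in forecasting error holds for your own data.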

One enterprise client we worked with doubled their sales efficiency by integrating AI-driven insights into their lead engagement process. The AI identified optimal timing and ranked leads by account-fit and intent signals, which meant their team spent less time on low-probability outreach and more time on conversations that were likely to close.

Supply Chain and Inventory Management

Supply chain management is undergoing what analysts at Supply Chain Management Review describe as a shift from reactive to predictive operations. The historical model treated procurement, manufacturing, and logistics as separate data environments. AI-based control towers now integrate those silos and ingest external signals (port congestion data, weather patterns, demand signals) to forecast disruptions before they arrive.

McKinsey's research on supply chain AI estimates that integrating AI into logistics operations can reduce costs by 5 to 20%. At DP World's Jebel Ali Free Zone, AI-powered predictive analytics within their terminal operating system eliminated 350,000 unnecessary container moves annually while improving truck servicing times by 20%. These are operational improvements that compound across every shipment and every quarter.

For mid-market companies that don't operate container terminals, the relevant version of this is AI-powered demand forecasting applied to your existing ERP or inventory management system. The technology is mature enough that the main barrier is data quality and integration, not the AI itself.

Customer Service and Support Operations

AI in customer service has matured past the frustrating chatbot era. Current systems can handle complex queries, personalize responses using customer history, and escalate to human agents when the situation requires it. The operational benefit is not just cost reduction but capacity: AI handles the volume of routine interactions so that your support team can spend their time on problems that require human judgment and relationship-building.

The healthcare company Avi Medical deployed multilingual AI agents to handle routine patient inquiries. The system automated 81% of common interactions while keeping complex cases routed to humans, resulting in a 93% reduction in costs and an 87% improvement in response times for the automated portion. That's a pattern we see across industries: AI works well when there's a clear boundary between what it handles and what gets escalated.

Why Do Most AI Projects Fail to Deliver ROI?

The Pilot Purgatory Problem

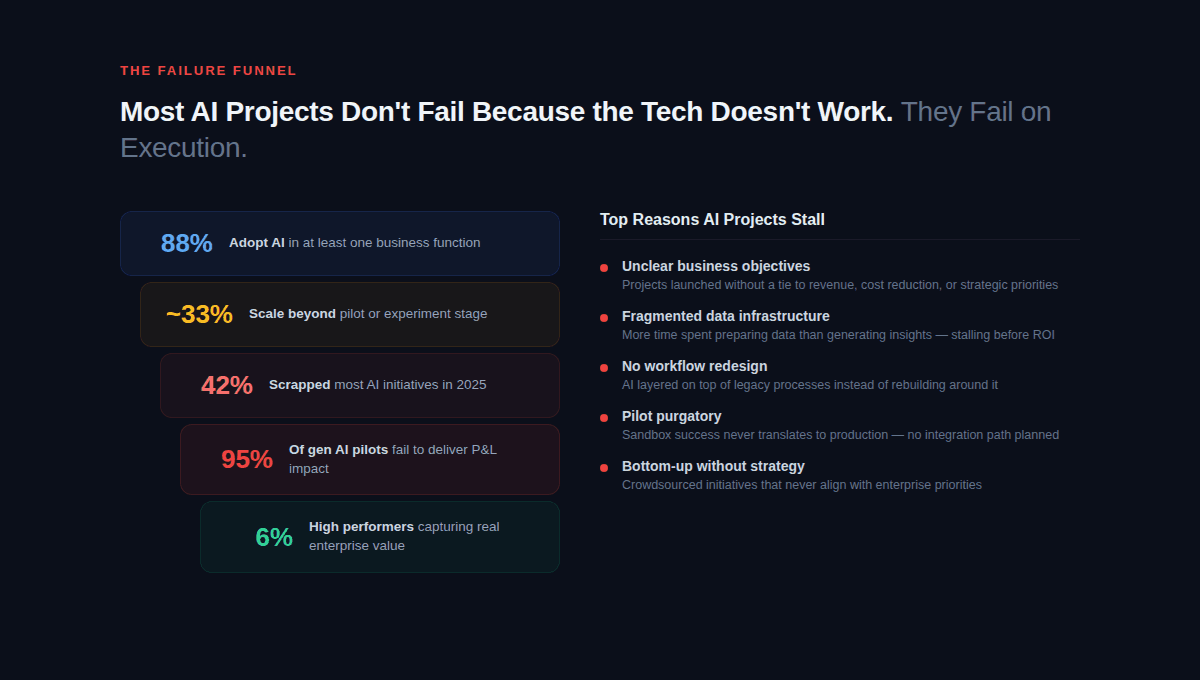

The failure rate in enterprise AI is not subtle. MIT's Project NANDA research, based on 300+ public AI deployments and 150 interviews with organizational leaders, found that roughly 95% of generative AI pilot programs fail to deliver measurable impact on the P&L. Separately, S&P Global reported that 42% of companies scrapped most of their AI initiatives in 2025, up from 17% the year before.

The consistent finding across every major study is that AI projects don't fail because the technology doesn't work. They fail because of unclear objectives, fragmented data, workflows that were never redesigned, and organizational resistance to changing established processes. Most pilots are launched in a sandbox, tested with sample data, declared a success in a demo, and then stall permanently when someone asks how to connect them to the actual systems the business runs on.

Understanding why AI implementation projects fail and what they really cost is important before committing budget, because the cost of a stalled project isn't just the software license. It's the time your team spent on it, the political capital that was burned, and the organizational skepticism that makes the next AI initiative harder to greenlight.

Workflow-First vs. Tool-First Thinking

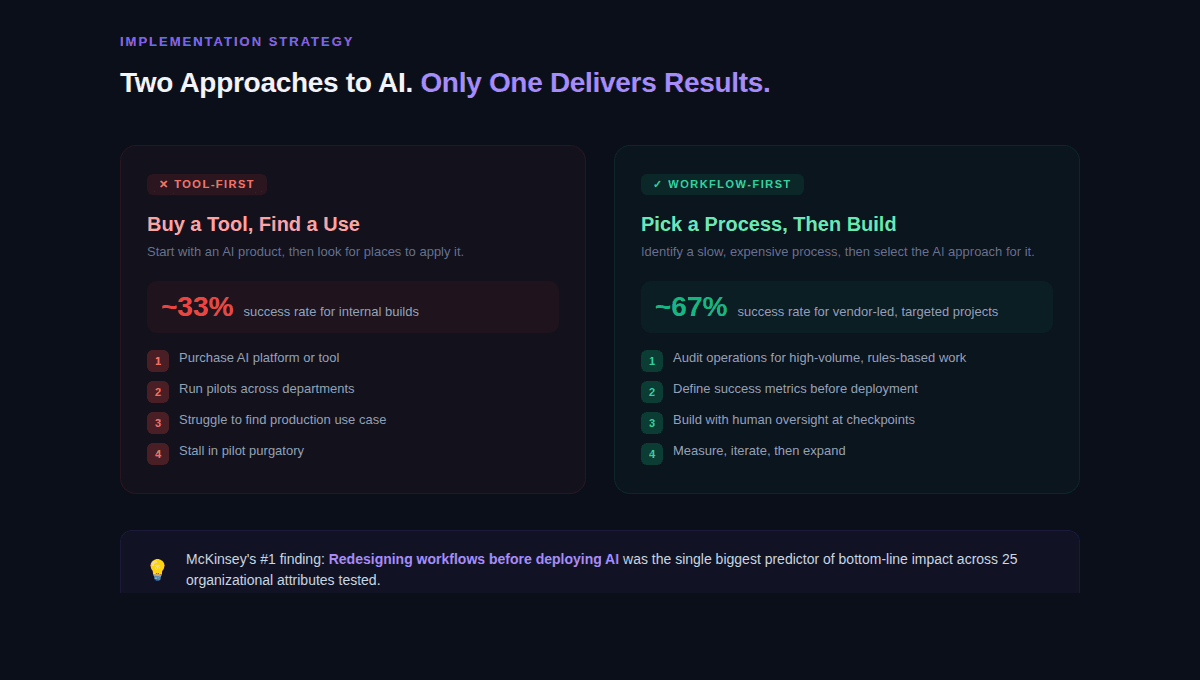

The MIT data reveals an important pattern: specialized vendor-led AI projects succeed approximately 67% of the time, while internal builds succeed only about 33% of the time. PwC's 2026 AI predictions reinforce this, arguing that companies adopting a top-down enterprise strategy, where leadership identifies specific workflows for AI investment, consistently outperform companies that crowdsource AI projects from the bottom up.

The difference comes down to whether you start with a workflow or start with a tool. Starting with a tool means you bought an AI product and now you're looking for places to use it. Starting with a workflow means you identified a specific process that's slow, expensive, or error-prone, and then you selected the AI approach that addresses that specific problem.

This is the same distinction that separates AI agents from AI tools. Tools give your team a new capability. Agents automate a workflow. If you're trying to reduce the number of hours your team spends on a process, you need the second approach. McKinsey's 2025 data found that out of 25 organizational attributes tested, the single biggest predictor of AI generating bottom-line impact was whether the company had redesigned workflows before deploying the technology.

How Should You Implement AI in Your Company?

Start With One Workflow, Not One Tool

The companies that get real results from AI share a common starting point: they pick one specific, well-understood process and automate it thoroughly before expanding. PwC's research describes the most successful AI adopters as companies where senior leadership identifies a few key workflows where the payoff can be significant, rather than running dozens of disconnected experiments.

A practical starting point is to audit your operations for processes that are high-volume, rules-based, and currently spread across multiple systems or manual steps. Invoice processing, employee onboarding, compliance documentation, and report generation are common first targets because they're well-defined enough to automate without ambiguity and frequent enough to produce measurable savings quickly.
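The audit described above can start as a simple spreadsheet or a few lines of code. The sketch below is one possible way to rank candidates, not a standard method: it keeps only rules-based processes and sorts them by the hours they consume each month. The `Workflow` fields and every number in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    runs_per_month: int
    minutes_per_run: float
    rules_based: bool      # can the steps be written down as explicit rules?
    systems_touched: int   # how many systems or manual handoffs are involved


def monthly_hours(workflow):
    """Staff hours the workflow consumes each month."""
    return workflow.runs_per_month * workflow.minutes_per_run / 60


def rank_candidates(workflows):
    """Keep rules-based workflows, largest monthly time cost first.

    Ties are broken in favor of workflows spread across more systems.
    """
    eligible = [w for w in workflows if w.rules_based]
    return sorted(
        eligible,
        key=lambda w: (monthly_hours(w), w.systems_touched),
        reverse=True,
    )


# Hypothetical audit results.
audit = [
    Workflow("Invoice processing", 800, 12, True, 3),
    Workflow("Employee onboarding", 15, 240, True, 4),
    Workflow("Vendor negotiation", 10, 180, False, 2),
    Workflow("Report generation", 40, 60, True, 2),
]
ranked = rank_candidates(audit)
```

In this made-up example, invoice processing comes out on top at 160 hours a month, and vendor negotiation is excluded because it depends on judgment rather than rules.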

If you haven't mapped your workflows yet, Novoslo's AI business audit tool can help you identify which processes in your organization are the strongest candidates for AI automation, along with projected ROI for each.

Build for Oversight, Not Full Automation

McKinsey's data shows that high-performing AI organizations are significantly more likely than their peers to have defined processes for determining when model outputs require human validation. This is not a weakness in the technology. It's a design principle. The systems that work in production are human-in-the-loop systems, where AI handles the volume and pattern-matching while humans handle exceptions, judgment calls, and final approvals.

This matters because the fear around AI implementation in most organizations is not about cost or complexity. It's about trust. When teams know that AI outputs are checked before they reach a client, a regulator, or a financial system, adoption rates increase. When AI is positioned as a draft-and-review tool rather than a fully autonomous agent, the resistance from experienced staff drops substantially, because they remain accountable for the final output.
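One common way to express "AI handles the volume, humans handle exceptions" is a routing rule that decides when an output needs a person. The sketch below is a minimal illustration under stated assumptions: the model is assumed to report a confidence score, and the thresholds, field names, and queue labels are hypothetical values you would set for your own process.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    document_id: str
    extracted_total: float
    confidence: float  # 0.0 to 1.0, assumed to be reported by the model


def route(output, confidence_floor=0.9, amount_ceiling=10_000.0):
    """Decide whether an AI output can proceed or needs human validation."""
    if output.confidence < confidence_floor:
        # The model itself is unsure: a person checks the extraction.
        return "human_review"
    if output.extracted_total > amount_ceiling:
        # High-stakes items always get a human look, however confident the model is.
        return "human_review"
    return "auto_process"


# Hypothetical outputs from a document-extraction step.
routine = route(ModelOutput("INV-001", 480.00, 0.97))
uncertain = route(ModelOutput("INV-002", 480.00, 0.62))
large = route(ModelOutput("INV-003", 25_000.00, 0.99))
```

Writing the rule down explicitly is the point: staff can see exactly which cases reach them and why, and the thresholds can be tightened or relaxed as trust in the system changes.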

For a deeper walkthrough of how to structure this, our guide on how to implement AI in your business covers the workflow-first framework in detail, including how to avoid the most common integration pitfalls.

Measure What Matters, Not Just Adoption

One of the recurring problems in enterprise AI is that companies measure adoption rates instead of business outcomes. The number of employees who logged into an AI tool last month is not a useful metric. The number of hours saved on invoice processing, the reduction in compliance review time, or the change in error rates on data entry: those are useful metrics.

McKinsey's research found that tracking well-defined KPIs for AI solutions is one of the practices that most strongly correlates with capturing real business value. This means defining your success criteria before deployment, not after. What does this workflow cost today in hours and dollars? What should it cost after AI is integrated? What's the acceptable error rate? These are the questions that keep an AI project accountable.
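The three questions above translate directly into arithmetic you can fix before deployment. This is a simplified sketch, not a complete ROI model: it ignores one-time implementation costs, and every figure in the example is hypothetical.

```python
def evaluate_workflow(baseline_hours, current_hours, hourly_rate,
                      monthly_ai_cost, observed_error_rate, max_error_rate):
    """Compare a workflow's monthly cost before and after AI is integrated."""
    hours_saved = baseline_hours - current_hours
    gross_savings = hours_saved * hourly_rate
    return {
        "hours_saved": hours_saved,
        "net_monthly_savings": gross_savings - monthly_ai_cost,
        "error_rate_ok": observed_error_rate <= max_error_rate,
    }


# Hypothetical figures for an invoice-processing workflow.
result = evaluate_workflow(
    baseline_hours=160,         # hours per month before AI
    current_hours=40,           # hours per month after AI
    hourly_rate=45.0,           # loaded cost per staff hour
    monthly_ai_cost=2000.0,     # licenses, usage fees, maintenance
    observed_error_rate=0.015,
    max_error_rate=0.02,        # agreed before deployment
)
```

With these made-up inputs, the workflow saves 120 hours and 3,400 in net cost per month while staying under the agreed error ceiling. If either number misses its target, the project has a clear signal to adjust rather than a vague sense that adoption is going well.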

For a structured approach to this, our framework for measuring AI transformation success provides a practical model for tracking the metrics that actually indicate whether your investment is working.

What About the Workforce?

The Real Shift: Tasks Change Before Jobs Do

A CNBC survey of senior HR leaders found that 89% expect AI to reshape jobs in 2026, and a London School of Economics study found that employees using AI for work tasks save an average of 7.5 hours per week. Those two data points capture the current reality: AI is already changing the content of people's work, even where it hasn't changed the headcount.

The World Economic Forum's Future of Jobs Report estimates that AI and information processing will affect 86% of businesses by 2030, but also projects that AI will create more jobs than it displaces if companies invest deliberately in reskilling and redesign work rather than just layering the technology onto existing structures. The companies that handle this transition well are the ones that redesign roles around what humans do best (judgment, relationships, creative problem-solving) while automating the repetitive components of those roles.

PwC's AI Jobs Barometer found that workers with AI skills command wage premiums of up to 56% over their peers, and that job numbers are actually rising even in highly automatable roles. The shift is not about elimination. It's about redistribution. Roles are changing in composition, and the organizations that recognize this early are building teams that are more productive per person rather than simply smaller.

Why Training Is a Business Function, Not an HR Afterthought

Deloitte's 2026 AI report identified the AI skills gap as the single biggest barrier to further AI integration, and found that training the workforce was the number-one way companies adjusted their talent strategies in response to AI. But there's a disconnect: while 53% of organizations are focused on educating employees, far fewer are actually redesigning roles, workflows, or career paths around AI capabilities.

Workers report saving significant time using AI tools, but only 25% receive formal AI training from their employers, according to The Adecco Group's 2025 workforce report. That creates a gap where employees are experimenting on their own (what researchers call "shadow AI") without guidance on how to apply those tools to work that matters. MIT's research found that over 90% of companies have employees using personal AI tools for work, often with better outcomes than official enterprise deployments.

The practical implication is that training should not be a one-time onboarding event. It should be structured around specific workflows, tied to measurable outcomes, and treated as an ongoing business investment with the same rigor applied to any other operational initiative.

What Does the Future of AI in Operations Look Like?

Agentic AI and Multi-Step Automation

The next stage of operational AI is agentic systems: AI that can plan and execute multi-step workflows rather than just responding to single prompts. McKinsey's 2025 survey found that 23% of organizations are already scaling agentic AI in at least one business function, and another 39% are experimenting with it. PwC predicts that 2026 is the year agentic AI moves from proof-of-concept to production, supported by centralized platforms with shared libraries of agents, templates, and oversight tools.

In practice, this means AI systems that can handle a sequence of tasks: receive a document, extract relevant data, cross-reference it against a database, flag exceptions, draft a response, and route it for human review. Each step in that chain currently requires either a person or a separate tool. Agentic AI consolidates those steps into a single workflow with human oversight at defined checkpoints.
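The sequence above can be sketched as a chain of small steps that ends at a human checkpoint. This is a structural illustration only: the extraction step is a plain-text parser standing in for a model call, the document is assumed to be an invoice, and the vendor table, field names, and queue labels are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical reference data: vendor name -> expected invoice amount.
KNOWN_VENDORS = {"Acme Supply": 1200.00}


@dataclass
class Invoice:
    raw_text: str
    fields: dict = field(default_factory=dict)
    exceptions: list = field(default_factory=list)
    draft: str = ""
    status: str = "received"


def extract(inv):
    # Stand-in for a model call: parse "Key: value" lines into fields.
    for line in inv.raw_text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            inv.fields[key.strip().lower()] = value.strip()
    return inv


def cross_reference(inv):
    # Compare extracted fields against the reference data and flag exceptions.
    vendor = inv.fields.get("vendor")
    if vendor not in KNOWN_VENDORS:
        inv.exceptions.append("unknown vendor")
        return inv
    try:
        amount = float(inv.fields.get("amount", ""))
    except ValueError:
        inv.exceptions.append("unreadable amount")
        return inv
    if amount != KNOWN_VENDORS[vendor]:
        inv.exceptions.append("amount mismatch")
    return inv


def draft_response(inv):
    if inv.exceptions:
        inv.draft = "Needs attention: " + "; ".join(inv.exceptions)
    else:
        inv.draft = "Matches purchase record; recommended for approval."
    return inv


def route_for_review(inv):
    # Human checkpoint: every invoice lands in a reviewer queue.
    inv.status = "exception_queue" if inv.exceptions else "approval_queue"
    return inv


def run_pipeline(raw_text):
    inv = Invoice(raw_text=raw_text)
    for step in (extract, cross_reference, draft_response, route_for_review):
        inv = step(inv)
    return inv


clean = run_pipeline("Vendor: Acme Supply\nAmount: 1200.00")
flagged = run_pipeline("Vendor: Acme Supply\nAmount: 1300.00")
```

Note that the pipeline never approves anything on its own. It prepares a draft and places the item in one of two queues, so the person at the checkpoint sees either a recommendation or a list of specific exceptions.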

For operations leaders, the relevant question is not whether agentic AI works (it does, in defined domains) but whether your data and processes are structured well enough to support it. Companies that invest now in clean data, documented workflows, and clear decision criteria will be positioned to adopt agentic systems as they mature. Companies that don't will be running the same catch-up cycle they ran with basic AI automation.

The Gap Between Early Movers and Everyone Else

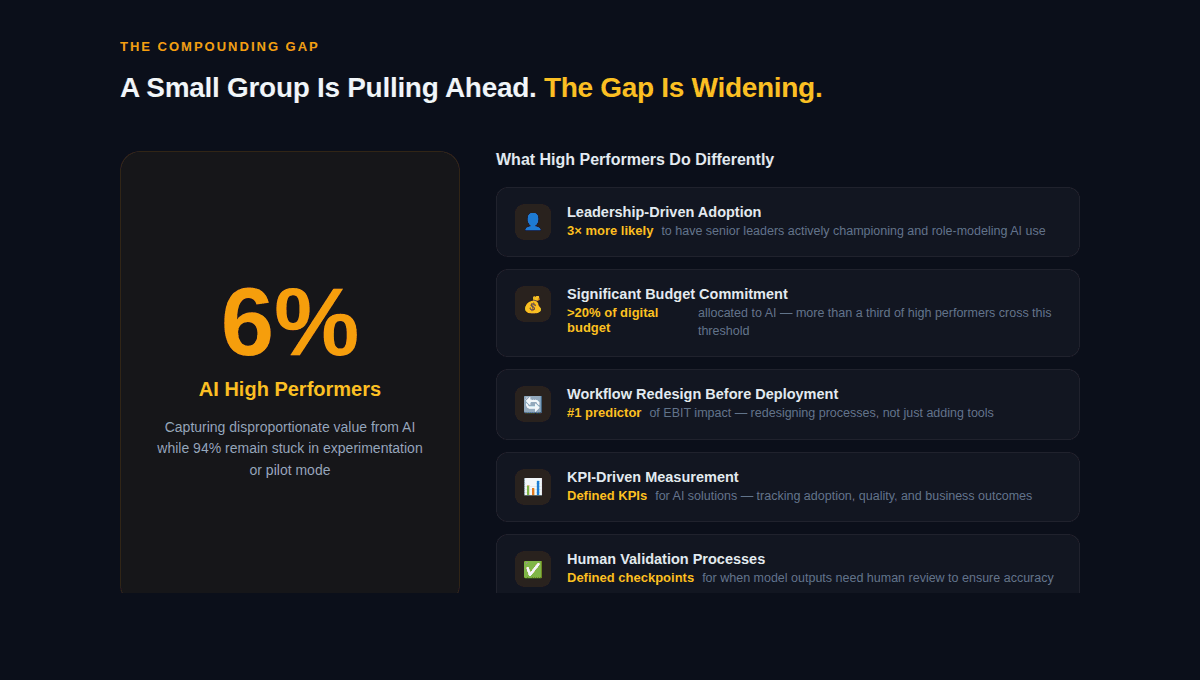

McKinsey's research identified a small group of AI high performers (about 6% of respondents) that are capturing disproportionate value from AI. These organizations are three times more likely to have senior leadership actively championing AI adoption. More than a third allocate over 20% of their digital budgets to AI, and about three-quarters have already scaled AI across the business. They are pulling ahead, and the gap is compounding.

The compounding part matters. Organizations that integrate AI into their operations now are building institutional knowledge, refining their data infrastructure, and training their teams, all of which make the next AI project easier and faster to deploy. Organizations that wait are not standing still. They are falling further behind in capability, cost structure, and speed of execution.

Where Should You Start Streamlining Operations with AI?

The question "how can AI streamline operations for my company" has a specific answer, but it depends on your company. The technology is mature, the use cases are proven, and the data on what works and what doesn't is abundant. The limiting factor is execution.

Three things separate the companies getting results from the ones stuck in pilot mode. First, they start with a specific workflow, not a technology purchase. Second, they build for human oversight from day one, which earns trust and accelerates adoption. Third, they measure business outcomes (hours saved, errors reduced, costs cut) rather than adoption metrics.

If you're an operations leader looking to move from interest to implementation, the practical next step is to identify one high-volume, rules-based process in your business and scope what AI-assisted automation would look like for that specific workflow. That's how most success stories start: not with a company-wide AI strategy, but with one process that works better than it did last month.

If you want a structured approach to identifying those opportunities, you can explore what an AI transformation partner actually does or use our AI ROI calculator to estimate the financial impact of automating your most time-intensive processes.