
Mar 2, 2026

Why 70% of AI Transformations Fail (And How to Avoid It)

AI transformations fail strategically, not technically. Learn the 5 real reasons and the A.I.R.E. framework to beat the 70% failure rate.

Depending on which report you read this year, somewhere between 70% and 95% of AI projects fail. MIT says 95%. RAND Corporation says 80%. Gartner, McKinsey, and BCG all land somewhere in between. The numbers are real, but they're also misleading — because they lump together very different kinds of failure under a single statistic.

Some projects fail because the technology doesn't work. That happens, but it's rare. Most AI projects fail because the organization around them wasn't ready. The strategy was unclear, the workflows stayed the same, and nobody measured what mattered before the project started. Understanding the difference between a technical failure and a strategic one is the first step toward making sure your AI investment actually pays off.

Why the 70–95% Failure Stats Are Misleading

The headline numbers tend to come from broad surveys that define failure loosely. A project that delivered value in one department but didn't scale enterprise-wide gets counted as a failure. A proof-of-concept that was intentionally exploratory and got shut down after learning what it needed to learn gets counted as a failure. A pilot that worked technically but didn't have executive support to move into production — also a failure.

S&P Global's 2025 survey found that 42% of companies abandoned most of their AI initiatives that year, up from just 17% the year before. The average organization scrapped 46% of its proof-of-concepts before they reached production. Those are significant numbers. But the reasons behind them matter more than the numbers themselves.

RAND Corporation found that AI projects fail at roughly twice the rate of other IT projects. That gap doesn't come from the models being worse. It comes from AI requiring a deeper level of organizational readiness — cleaner data, redesigned processes, clearer ownership — that most companies haven't built yet.

What Failure Actually Means in Practice

When we work with organizations that have "failed" at AI, the pattern is almost always the same. The technology performed fine in a controlled setting. The demo impressed the leadership team. Then the project stalled because it was never connected to an actual business outcome, or because the workflow it was supposed to improve was already broken, or because nobody with budget authority owned the initiative.

This is what we mean when we say most AI projects fail strategically, not technically. The model works. The infrastructure around it doesn't.

The 5 Real Reasons AI Transformations Fail

Most articles about AI failure cite poor data quality, lack of talent, and resistance to change. Those are real factors, but they're symptoms. The root causes run deeper.

1. No Economic Baseline

This is the most common and least discussed reason AI projects fail. The organization starts an AI initiative without measuring what things cost before AI enters the picture. How long does the current process take? How many people touch it? What does each error cost? What's the throughput?

Without that baseline, there's no way to calculate ROI after deployment. And without ROI, there's no internal case to scale the project — even if it works. The initiative quietly dies, and the 70% failure stat gets another data point.

If you're planning an AI transformation, the single most important thing you can do in the first month is measure what you're starting from. Not in general terms. In dollars, hours, and error rates.
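As a rough illustration of what such a baseline could look like in practice, here is a minimal Python sketch. The field names, the cost model (labor plus error rework), and the invoice-approval numbers are all assumptions made up for this example, not figures from any of the studies cited here.

```python
from dataclasses import dataclass


@dataclass
class ProcessBaseline:
    """Pre-AI measurements for one process. All field names are illustrative."""

    runs_per_year: int     # how many times the process executes annually
    hours_per_run: float   # total staff hours across everyone who touches it
    hourly_cost: float     # fully loaded cost per staff hour, in dollars
    error_rate: float      # fraction of runs that contain an error (0.0 to 1.0)
    cost_per_error: float  # average dollars to detect and fix one error

    def annual_labor_cost(self) -> float:
        # Hours spent on the process over a year, priced at the loaded rate.
        return self.runs_per_year * self.hours_per_run * self.hourly_cost

    def annual_error_cost(self) -> float:
        # Expected number of errors per year times the cost of each one.
        return self.runs_per_year * self.error_rate * self.cost_per_error

    def annual_cost(self) -> float:
        return self.annual_labor_cost() + self.annual_error_cost()


# Made-up numbers for a hypothetical invoice-approval process.
baseline = ProcessBaseline(
    runs_per_year=12_000,
    hours_per_run=0.5,
    hourly_cost=60.0,
    error_rate=0.04,
    cost_per_error=150.0,
)

print(f"Labor:  ${baseline.annual_labor_cost():,.0f}")
print(f"Errors: ${baseline.annual_error_cost():,.0f}")
print(f"Total:  ${baseline.annual_cost():,.0f}")
```

The point of writing it down this way is that every input is something a team can measure in the first month, and the same structure can be filled in again after deployment for a like-for-like comparison.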

2. Tool-First Strategy

A surprising number of organizations start their AI journey by purchasing software. They attend a demo, get excited about the possibilities, and buy a platform before they've clearly identified which bottlenecks they're trying to solve.

This leads to a predictable outcome: the tool does something impressive in isolation, but it doesn't connect to the actual problem. The team ends up buying the wrong AI solution entirely — not because the product is bad, but because the selection happened before the diagnosis.

The organizations that get this right work in reverse. They start with the operational problem, map the process, identify where time and money are lost, and then select a tool that addresses that specific gap.

3. No Process Redesign

McKinsey's 2025 State of AI survey tested 25 attributes across nearly 2,000 organizations and found that workflow redesign had the single strongest correlation with EBIT impact from AI. High-performing organizations — the roughly 6% that reported meaningful financial returns — were nearly three times more likely to have fundamentally redesigned their workflows around AI rather than layering AI onto existing processes.

BCG's research supports the same finding: companies that merely deploy AI tools without changing how work gets done see minimal returns, while those that reshape workflows end-to-end save significantly more time and shift employees toward higher-value tasks.

This is one of the most underappreciated dynamics in AI implementation. If you take a broken, manual, approval-heavy workflow and add AI on top of it, you get a slightly faster broken workflow. The bottleneck doesn't disappear — it moves. A workflow-first implementation guide can help teams sequence this correctly, starting with process redesign before tool selection.

4. No Executive Owner

AI projects that live between IT and operations tend to die there. IT sees AI as a technical deployment. Operations sees it as someone else's initiative. Without a single business leader who owns the outcome — not the technology, the outcome — the project loses momentum the moment it encounters friction.

McKinsey's data reinforces this: organizations where senior leaders actively champion AI adoption and model its use perform significantly better than those that delegate AI to a technical team. This isn't about having a Chief AI Officer title on the org chart. It's about having one person who is accountable for whether the AI investment produces a measurable business result.

5. Pilot Paralysis

IDC research found that for every 33 AI proof-of-concepts a company launches, only four make it into production, a conversion rate of roughly 12%. Gartner predicted that at least 30% of generative AI projects would be abandoned after proof-of-concept by the end of 2025, citing escalating costs, unclear business value, and poor data quality.

The pattern is consistent across industries: organizations launch pilots in low-risk environments, get promising results, and then never build the path from pilot to production. There's no integration plan, no change management process, no production infrastructure. The pilot runs indefinitely in a sandbox while the team moves on to the next experiment.

MIT's NANDA Initiative studied over 300 public AI deployments and found that 95% of enterprise AI pilots failed to progress to scaled adoption. The research identified a key gap: most enterprise AI tools can't retain memory, adapt to feedback, or integrate into actual workflows. They work in isolation, but they don't learn alongside the organization. That gap between "it works in a demo" and "it works in production" is where most AI investments go to die.

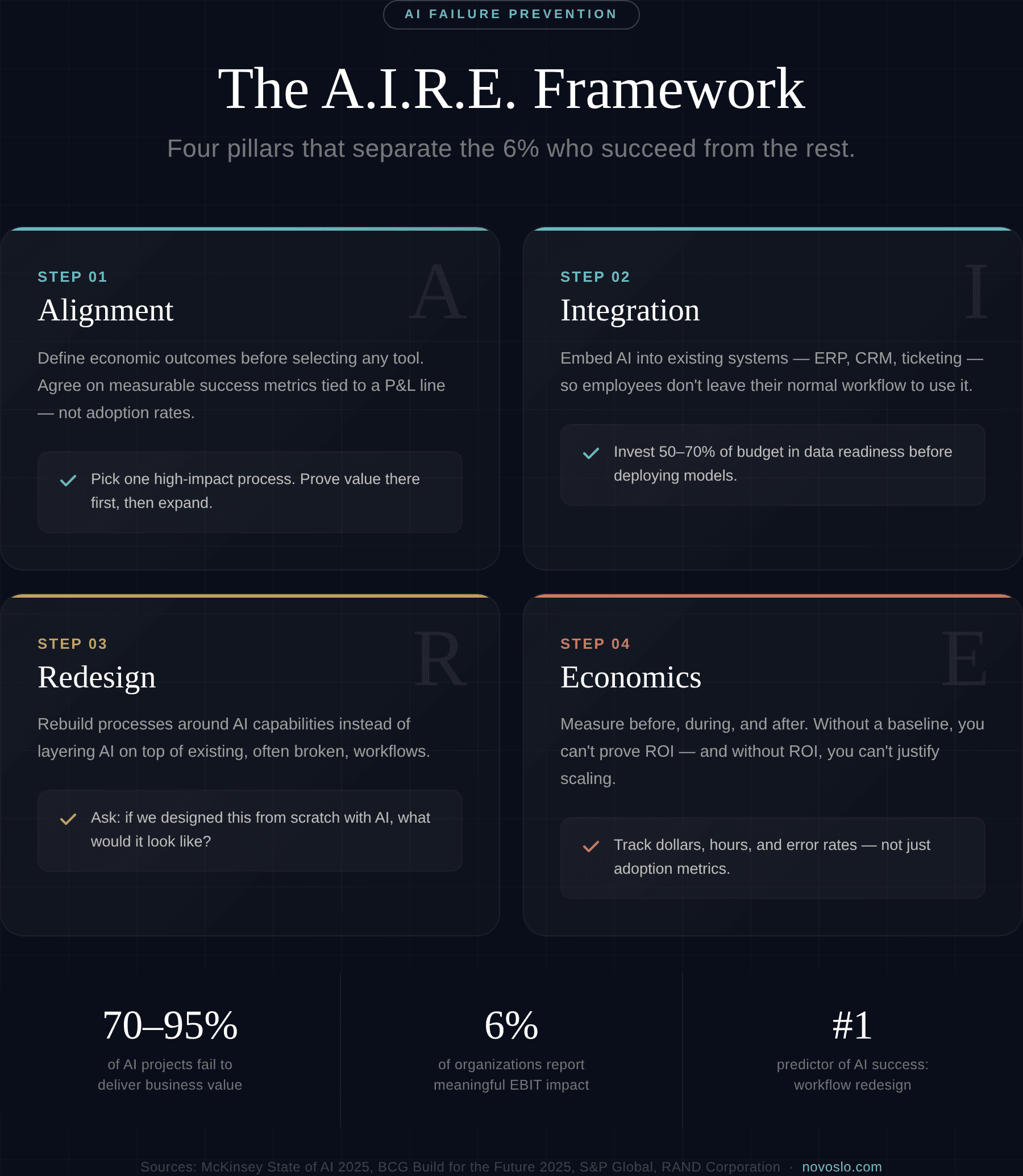

The A.I.R.E. Framework: How to Prevent AI Failure

Based on the patterns we see across organizations that succeed and those that don't, we've developed a simple framework for evaluating and structuring AI initiatives. We call it A.I.R.E.

Alignment — Define Economic Outcomes Before Selecting Tools

Before any tool is purchased or any model is tested, the organization needs agreement on what success looks like in business terms. That means specific metrics: cost per transaction, processing time, error rate, throughput per employee. These should be measurable, tied to a P&L line, and agreed upon by the business owner, not just the technical team.

Alignment also means agreeing on scope. The organizations that succeed tend to pick one high-impact process, prove value there, and then expand. The ones that fail try to transform everything at once.

Integration — Embed AI Into Existing Systems and Workflows

AI that operates in a standalone tool or dashboard rarely survives. It needs to live inside the systems people already use — the ERP, the CRM, the ticketing system, the communication platform. If employees have to leave their normal workflow to interact with AI, adoption drops and the project stalls.

Integration also means data. The AI needs access to clean, governed, real-time data from the systems it's supposed to improve. Informatica's 2025 research found that data quality and readiness were cited as the top obstacle to AI success by 43% of organizations. Winning programs invest 50–70% of their timeline and budget in data preparation before they deploy a single model.

Redesign — Rebuild Processes Around AI, Not the Other Way Around

This is where most organizations get stuck. They take their existing eight-step, four-approval, three-handoff process and try to accelerate it with AI. The better approach is to ask: if we were designing this process from scratch with AI as a core capability, what would it look like?

That question usually leads to fewer steps, fewer handoffs, different roles, and different decision points. It's harder than bolting AI onto what exists, but it's the only approach that consistently produces meaningful financial returns.

Economics — Measure Before, During, and After

Measurement is the thread that holds the entire framework together. Without a pre-deployment baseline, you can't prove value. Without ongoing measurement, you can't optimize. Without post-deployment analysis, you can't justify expansion.

The economics layer should track both direct metrics (cost savings, time reduction, error reduction) and indirect metrics (employee satisfaction, customer experience, capacity freed for higher-value work). Understanding your total AI implementation costs upfront prevents the budget surprises that kill projects midstream.
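For the direct metrics, the before-and-after comparison reduces to simple arithmetic. The sketch below is one possible way to express it, assuming a single up-front implementation cost and annual run costs measured before and after deployment. The function name, its parameters, and the sample figures are hypothetical, and the calculation ignores discounting and ongoing license fees.

```python
from typing import Optional, Tuple


def simple_roi(
    baseline_annual_cost: float,
    current_annual_cost: float,
    implementation_cost: float,
) -> Tuple[float, float, Optional[float]]:
    """Return (annual_savings, first_year_roi, payback_months).

    payback_months is None when the project produces no savings.
    """
    if implementation_cost <= 0:
        raise ValueError("implementation_cost must be positive")

    # Savings only exist relative to the pre-deployment baseline.
    annual_savings = baseline_annual_cost - current_annual_cost

    # First-year ROI: net gain in year one divided by what was spent.
    first_year_roi = (annual_savings - implementation_cost) / implementation_cost

    # Months of savings needed to recover the implementation cost.
    payback_months = (
        12 * implementation_cost / annual_savings if annual_savings > 0 else None
    )
    return annual_savings, first_year_roi, payback_months


# Made-up figures: process cost before and after, plus total implementation cost.
savings, roi, payback = simple_roi(
    baseline_annual_cost=432_000,
    current_annual_cost=300_000,
    implementation_cost=110_000,
)

print(f"Annual savings: ${savings:,.0f}")
print(f"First-year ROI: {roi:.0%}")
print(f"Payback period: {payback:.1f} months")
```

Note that the first argument cannot be reconstructed after the fact. If the baseline was never measured, none of the three outputs can be computed with any credibility.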

What Do Winning Companies Actually Do Differently?

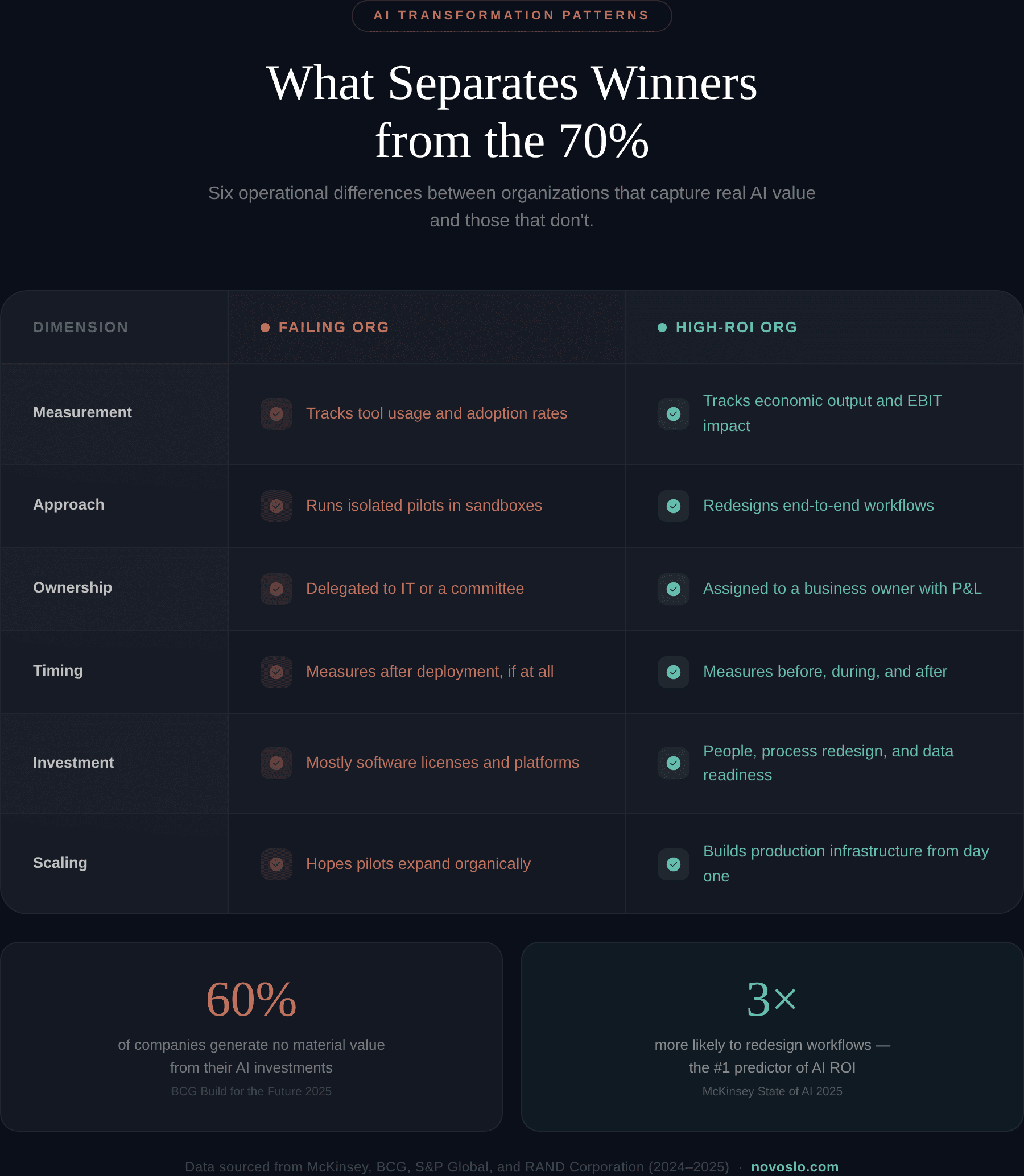

McKinsey and BCG data from 2025 paints a clear picture of what separates the 5–6% of organizations seeing real AI value from the rest.

| | Failing Organization | High-ROI Organization |
| --- | --- | --- |
| What they measure | Tool usage and adoption rates | Economic output and EBIT impact |
| How they start | Run isolated pilots | Redesign end-to-end workflows |
| Who owns it | Delegated to IT or a technical team | Assigned to a business owner with P&L accountability |
| When they measure | After deployment (if at all) | Before, during, and after deployment |
| What they invest in | Software licenses and platforms | People, process redesign, and data readiness |
| How they scale | Hope pilots expand organically | Build production infrastructure from day one |

BCG's 2025 global study of 1,250 companies found that only about 5% create substantial AI value at scale, while 60% generate no material value from their AI investments despite meaningful spending. The differentiator is not the technology. It's whether the organization treats AI as a tool to add or a reason to redesign how work gets done.

One enterprise client we worked with doubled their sales efficiency by applying this exact approach — measuring first, identifying the specific bottleneck, redesigning the process, and then introducing AI to handle the parts it's genuinely better at.

Should You Kill Your AI Project? A Decision Checklist

Not every AI project deserves to continue. Sometimes the best decision is to stop, regroup, and restart with the right structure. If you're evaluating an existing AI initiative, work through these questions honestly:

Is adoption still growing after the initial rollout? If the people who are supposed to use the tool aren't using it, or have stopped using it, the problem is usually integration or workflow fit, not training.

Has ROI been measured against a pre-deployment baseline? If you didn't measure before you started, you may not be able to prove value no matter how well the tool performs.

Are cost savings visible on a P&L line? Efficiency gains that don't show up in financial statements tend to disappear during the next budget review.

Is there a single business owner accountable for outcomes? If accountability is shared between IT, operations, and a steering committee, nobody owns it.

Would you reinvest in this project today, knowing what you know now? This is the most honest question on the list. If the answer is no, continuing is a sunk cost decision.

Is there a clear path from pilot to production? If the project has been in proof-of-concept mode for more than six months without a production timeline, it's likely stuck.

If you answered "no" to three or more of these, the project probably needs to be restructured or shut down. That's not a failure — it's a correction. The real failure is continuing to fund something that isn't producing results because nobody wants to be the person who pulls the plug.
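For teams that want to run this review across several projects, the rule of thumb can be written down as a small script. This is only a sketch. Each question is restated as a positive statement so that `False` always marks the warning sign, the wording of the statements is our paraphrase, and the threshold of three simply mirrors the guidance above.

```python
from typing import Dict, List, Tuple

# Each item is phrased so that False is the warning sign.
CHECKLIST: List[str] = [
    "Adoption is growing after the initial rollout",
    "ROI has been measured against a pre-deployment baseline",
    "Cost savings are visible on a P&L line",
    "A single business owner is accountable for outcomes",
    "We would reinvest in this project today",
    "There is a clear path from pilot to production",
]


def review(answers: Dict[str, bool], threshold: int = 3) -> Tuple[List[str], str]:
    """Return the list of warning signs and a suggested verdict."""
    missing = [item for item in CHECKLIST if item not in answers]
    if missing:
        raise ValueError(f"Unanswered checklist items: {missing}")

    # Collect every item the team could not honestly confirm.
    warnings = [item for item in CHECKLIST if not answers[item]]
    verdict = "restructure or shut down" if len(warnings) >= threshold else "continue"
    return warnings, verdict


# Hypothetical answers for one project under review.
answers = {item: True for item in CHECKLIST}
answers["ROI has been measured against a pre-deployment baseline"] = False
answers["Cost savings are visible on a P&L line"] = False
answers["There is a clear path from pilot to production"] = False

warnings, verdict = review(answers)
print(f"Warning signs: {len(warnings)}")
for item in warnings:
    print(f"  - {item}")
print(f"Suggested verdict: {verdict}")
```

The script does not make the decision for anyone. Its value is in forcing every question to be answered explicitly, by name, for every project.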

Where to Go from Here

The 70% failure rate in AI transformations isn't inevitable. It's the result of predictable, avoidable mistakes: starting without measurement, buying tools before defining problems, skipping process redesign, leaving ownership unclear, and staying in pilot mode too long.

The A.I.R.E. framework (Alignment, Integration, Redesign, Economics) gives organizations a structure to avoid each of these. It's not complicated. But it does require discipline and a willingness to do the unglamorous work before the exciting work.

If your organization is planning an AI initiative or trying to figure out why an existing one isn't delivering, it might be worth having a conversation about where the gaps are. An AI transformation partner can help identify whether the issue is strategic, operational, or technical, and what the right next step actually looks like.

We're always happy to talk through it. No pitch, no pressure. Just a conversation about what's actually going on inside the business and whether AI is the right move at all, or the right move right now.